If you are building agent memory architectures today, you are probably building a tape recorder. You are capturing every message, every tool call, every response, and replaying it back into the context window the next time the agent runs. It works. Until it does not.

I hit the wall about six weeks ago. A support agent I had been building for an internal tool was performing beautifully in short sessions. Five or six exchanges, maybe ten. The agent remembered what the user had said, referenced earlier messages, and felt genuinely conversational. Then a tester ran a forty-message session. The agent started hallucinating details from message twelve. By message fifty, it was confidently referencing a database migration the user had never mentioned.

The context window had filled up. The model was drowning in tokens.

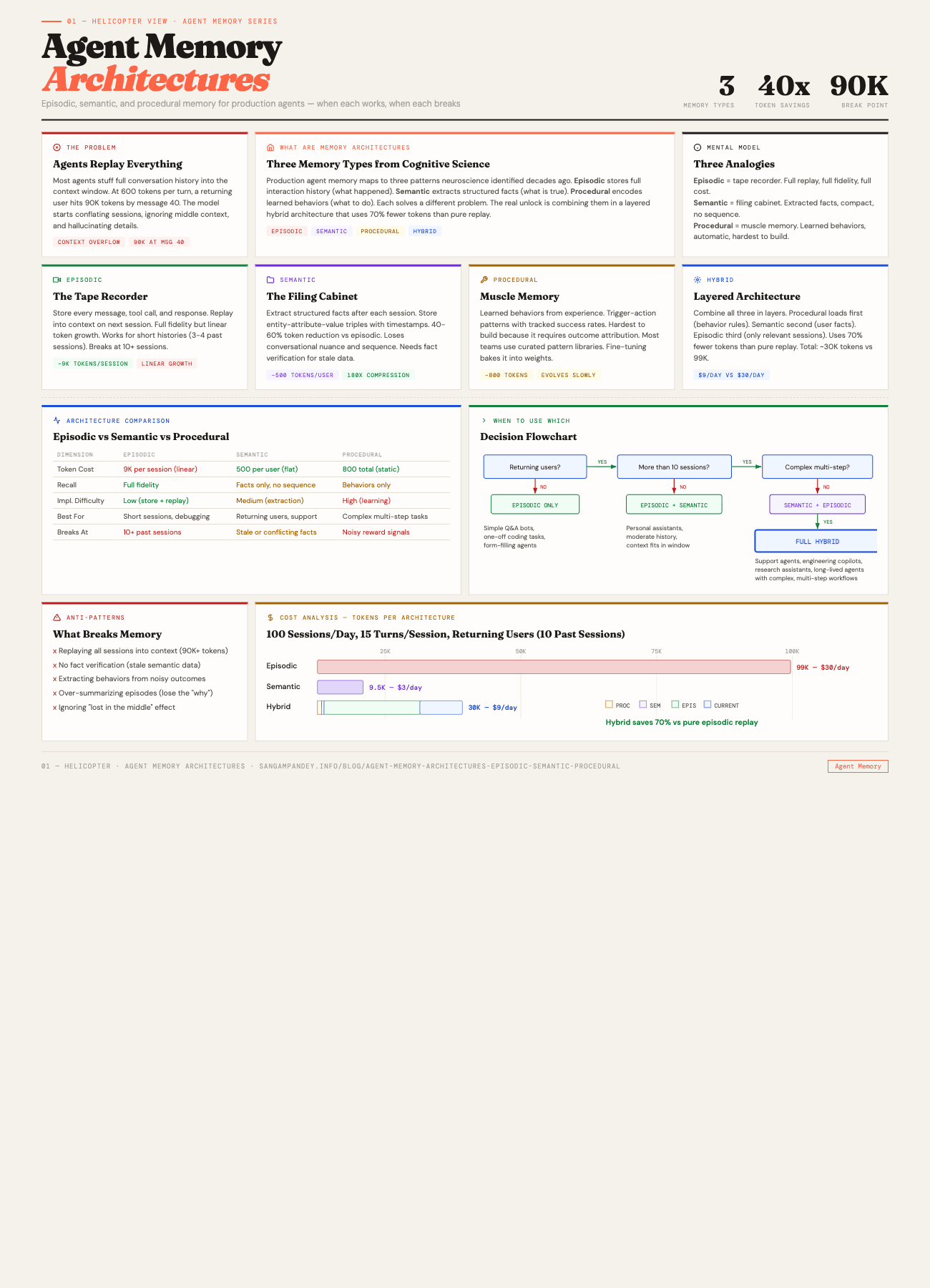

That failure sent me down a rabbit hole into how memory actually works, both in cognitive science and in production agent systems. What I found is that most teams building agents are reinventing one of three patterns that neuroscience identified decades ago. Episodic memory, semantic memory, and procedural memory. Each solves a different problem. Each breaks in a different way. And the real unlock is not picking one. It is knowing when to use which.

For a visual overview of all three memory architectures, see the Agent Memory Architectures infographic.

Episodic memory is a tape recorder. Semantic memory is a filing cabinet. Procedural memory is muscle memory. Understanding these three analogies is the fastest way to reason about which architecture your agent actually needs.

Table of Contents#

- How much does it cost to be stateless?

- What is episodic memory?

- When does episodic memory break?

- How does semantic memory reduce token costs?

- How to implement semantic memory

- When should you use procedural memory?

- Why is procedural memory the hardest to build?

- How do hybrid architectures combine all three?

- Practical implementation guide

- What does each memory architecture cost in tokens?

- How do you decide which memory architecture to use?

- FAQ

How Much Does It Cost to Be Stateless?#

Memory Architecture Comparison at a Glance#

| Dimension | Episodic | Semantic | Procedural |

|---|---|---|---|

| Token Cost | ~9,000 per session (linear growth) | ~500 per user (flat) | ~800 total (static) |

| Recall Accuracy | Full fidelity, exact replay | Facts only, no sequence | Behaviors only, no raw data |

| Implementation Complexity | Low (store and replay) | Medium (extraction pipeline) | High (learning from outcomes) |

| Best Use Case | Short sessions, debugging replays | Returning users, support agents | Complex multi-step workflows |

| Breaks At | 10+ past sessions (90K tokens) | Stale or conflicting facts | Noisy reward signals |

Most agents in production today are stateless. Every session starts from zero. The agent has no idea what happened yesterday, what the user prefers, or what failed last time. This is fine for simple tasks. Ask a question, get an answer. But the moment you want an agent to do anything that requires continuity, you are in trouble.

I wrote about this problem in detail when I covered MemPalace, and the core issue has not changed. Stateless agents force users to repeat themselves. They force developers to stuff everything into system prompts that grow longer and longer. And they create a ceiling on what agents can actually do.

Think about what a human support agent knows after three months on the job. They know that customer X always has PostgreSQL issues on Tuesdays after their backup job runs. They know that the "connection timeout" error usually means the customer forgot to whitelist the new IP range. They know that when someone says "it is slow," they should check the query planner first, not the network. None of that knowledge came from a manual. It came from experience.

Stateless agents cannot have experience. Every session is their first day on the job.

The cost is measurable. I tracked it across three internal agents over two months. Users repeated the same context setup an average of 3.2 times per week. Support resolution time was 40% longer than it needed to be because agents kept asking questions the user had already answered in previous sessions. And the agents kept suggesting solutions that had already been tried and rejected.

That is the tax you pay for statelessness. Not a catastrophic failure. A slow, persistent drag on everything the agent does.

Key takeaway: Stateless agents force users to repeat context an average of 3.2 times per week, and resolution times run 40% longer than they need to be.

What Is Episodic Memory?#

Episodic memory is the simplest form of agent memory, and the one most teams implement first. It is a full replay of what happened. Every user message, every agent response, every tool invocation, stored and replayed into the context window.

The analogy I keep coming back to is a tape recorder. You press record at the start of the conversation, and you press play the next time the agent needs to remember. The agent gets the raw material of what happened, in order, with full fidelity.

In cognitive science, episodic memory is the system that lets you remember specific events. Not facts in the abstract, but the experience itself. You remember the meeting where the client got frustrated. You remember the debugging session where you found the race condition at 2 AM. The memory is timestamped, sequential, and rich with context.

For agents, episodic memory typically looks like this:

interface EpisodicMemory {

sessionId: string

timestamp: Date

messages: ConversationMessage[]

toolCalls: ToolInvocation[]

metadata: {

userId: string

topic: string

resolution: string | null

}

}

You store the full conversation history, indexed by session and user. When the agent starts a new session, you retrieve relevant past sessions and inject them into the context.

The implementation is straightforward. Store conversations in a database. When a new session starts, query for recent sessions with the same user or similar topics. Prepend them to the system prompt or inject them as context. The agent now "remembers" what happened before.

This pattern works remarkably well for short histories. If you have three or four past sessions to reference, each with ten to fifteen messages, the agent can maintain continuity without breaking a sweat. It feels like talking to someone who actually remembers you.

The appeal is obvious. No information is lost. The agent has access to the exact words used, the exact sequence of events, the exact tools that were called. If a user says "do what you did last time," the agent can look at the tape and replay the steps.

Key takeaway: Episodic memory is the simplest to implement and preserves full fidelity, but it grows linearly with every interaction and cannot scale beyond a handful of past sessions.

When Does Episodic Memory Break?#

Here is the problem. Context windows are finite. And episodic memory grows linearly with every interaction.

I ran the numbers on a real support agent workload. Average message length was about 150 tokens for user messages, 400 tokens for agent responses (including tool call descriptions). With metadata overhead, each conversational turn consumed roughly 600 tokens. A typical session was 15 turns, so about 9,000 tokens per session.

Now scale that. A returning customer with 10 past sessions is carrying 90,000 tokens of episodic memory. On Claude with a 200K context window, that is nearly half the available context consumed before the current conversation even starts. And that is a moderate user. Power users with 30 or 40 past sessions would blow past any context limit.

The failure mode is not dramatic. The agent does not crash. It degrades. Around message 40 in my testing, with a full context window, three things happen consistently:

First, the model starts conflating details from different sessions. A database name from session 7 gets attributed to the problem described in session 15. The agent is not lying. It is losing the ability to distinguish between similar memories packed tightly together.

Second, early context gets effectively ignored. This is the well-documented "lost in the middle" phenomenon (Liu et al.). Information at the start and end of the context window gets more attention than information in the middle. Your most important historical context, the first few sessions that established the relationship, ends up in the dead zone.

Third, latency increases. More input tokens means more processing time and higher costs. A request that took 2 seconds with a fresh context now takes 8 seconds because the model is processing 150K tokens of history.

There are mitigation strategies, of course. Sliding windows that keep only the most recent N sessions. Summarization pipelines that compress old sessions into shorter summaries. Relevance-based retrieval that only pulls in sessions matching the current topic. But all of these involve lossy compression. You are trading fidelity for feasibility. And the decision about what to keep and what to discard is itself a hard problem.

The fundamental limitation is this: episodic memory tries to preserve everything, and context windows cannot hold everything. Something has to give.

Key takeaway: Episodic memory hits a hard wall around 90K tokens at message 40, where the model begins conflating sessions and ignoring middle context.

How Does Semantic Memory Reduce Token Costs?#

Semantic memory takes a completely different approach. Instead of storing what happened, it stores what is true.

In cognitive science, semantic memory is your knowledge of facts, concepts, and relationships. You know that Paris is the capital of France. You know that PostgreSQL supports JSONB columns. You know that your colleague prefers Slack over email. These are not memories of specific events. They are extracted facts that exist independent of when or how you learned them.

For agents, semantic memory means maintaining a structured knowledge layer. Instead of replaying a conversation where the user mentioned their database, you store a fact:

{

"entity": "customer_acme_corp",

"facts": {

"database": "PostgreSQL 15.3",

"billing_plan": "Enterprise",

"deployment": "AWS us-east-1",

"primary_contact": "Jordan",

"known_issues": ["connection pooling under load", "backup job conflicts"],

"preferred_communication": "technical, no hand-holding"

},

"last_updated": "2026-04-18T14:30:00Z"

}

This is a filing cabinet. Facts organized by entity, categorized, dated, and retrievable without replaying any conversation. The agent does not need to know that you mentioned PostgreSQL 15 in message 7 of session 3. It just needs to know that you use PostgreSQL 15.

The compression ratio is dramatic. A 9,000-token conversation might yield 200 tokens of semantic facts. Ten sessions worth of episodic memory (90,000 tokens) might compress down to 500 tokens of structured knowledge. That is a 180x reduction.

But the value is not just compression. Semantic memory enables a fundamentally different kind of agent behavior. Instead of sifting through conversation logs to find relevant details, the agent starts every session with a crisp understanding of who it is talking to and what matters. The agent can say, "I see you are on PostgreSQL 15 running on AWS us-east-1. Is this related to the connection pooling issue we have been tracking?" That is not retrieval. That is knowledge.

I explored this concept when I wrote about the LLM Wiki pattern for personal knowledge bases. The idea of extracting structured knowledge from unstructured interactions is the same principle, applied at the agent level instead of the personal level.

Key takeaway: Semantic memory trades conversational nuance for a 40-60% token reduction, turning 90K tokens of history into 500 tokens of structured facts.

How to Implement Semantic Memory#

Building semantic memory requires solving two problems: extraction and organization.

Extraction is the process of pulling facts out of conversations. You can do this with a dedicated extraction pass after each session, using the LLM itself as the extractor. The prompt looks something like:

Given this conversation, extract all factual information about the user,

their environment, preferences, and any technical details mentioned.

Return structured JSON with entity-attribute-value triples.

Do not include opinions, speculation, or transient details.

The key word there is "transient." Not everything in a conversation is worth remembering. The user saying "hold on, let me check" is episodic noise. The user saying "we migrated to Kubernetes last month" is a semantic fact. Teaching the extraction pipeline to distinguish between the two is where the real work happens.

Organization determines how extracted facts are stored and queried. There are three common patterns:

Key-value stores are the simplest. Each entity (user, project, system) has a set of key-value pairs. Easy to implement, easy to query, but limited in expressing relationships between entities.

Knowledge graphs are the most expressive. Entities are nodes, relationships are edges. You can represent that "Acme Corp uses PostgreSQL," "PostgreSQL runs on AWS," and "AWS us-east-1 has a known latency issue" as connected facts. Graph queries let you traverse these relationships to answer questions the individual facts could not answer alone.

interface KnowledgeTriple {

subject: string // "acme_corp"

predicate: string // "uses_database"

object: string // "postgresql_15"

confidence: number // 0.95

source: string // "session_2026-04-10"

lastVerified: Date

}

Vector-indexed fact stores combine the flexibility of natural language with the precision of structured storage. Facts are stored as natural language sentences ("Acme Corp runs PostgreSQL 15.3 on AWS us-east-1") and indexed with embeddings for semantic retrieval. This approach is forgiving of messy extraction but less precise than structured representations.

The choice depends on your use case. For a support agent handling hundreds of customers, a knowledge graph gives you the richest queries. For a coding assistant that needs to remember project context, a key-value store per project is often sufficient. For a general-purpose assistant, vector-indexed facts offer the best balance of flexibility and recall.

One pattern I have found essential is fact verification. Semantic memory can go stale. The user might migrate from PostgreSQL to MySQL. If your semantic memory still says "uses PostgreSQL," the agent will give wrong answers with high confidence. Adding a lastVerified timestamp and periodically prompting the user to confirm key facts prevents this kind of drift.

Key takeaway: Semantic memory requires solving extraction (pulling facts from conversations) and organization (storing them for fast retrieval), with fact verification to prevent stale data from poisoning agent responses.

When Should You Use Procedural Memory?#

Procedural memory is the most interesting of the three, and the hardest to implement well.

In cognitive science, procedural memory is the system that stores learned skills and behaviors. You do not consciously remember how to ride a bicycle. You just do it. Your muscles know the pattern. A professional pianist does not think about each finger placement. The movements are encoded at a level below conscious recall.

For agents, procedural memory means learned behaviors. Patterns the agent has discovered through experience that change how it acts, not just what it knows. This is not about remembering facts (semantic) or events (episodic). It is about remembering what works.

Here is a concrete example. Say your agent integrates with a third-party API that occasionally rate-limits requests. The first time it hits a 429 response, it fails. The second time, maybe a developer adds retry logic to the system prompt. But with procedural memory, the agent could learn this pattern itself:

interface ProceduralRule {

trigger: string // "API returns 429 status"

learned_behavior: string // "Wait 2^attempt seconds, retry up to 3 times"

confidence: number // 0.92

success_rate: number // 0.87

times_applied: number // 143

source: "experience" | "instruction" | "fine_tuning"

}

The agent does not just know that rate limiting exists (semantic). It does not just remember the specific session where it first encountered a 429 (episodic). It has internalized a behavioral pattern. When it sees a 429, it automatically applies exponential backoff, because that is what has worked in the past.

This is fundamentally different from the other two memory types. Episodic memory says "here is what happened." Semantic memory says "here is what is true." Procedural memory says "here is what to do."

Other examples of procedural memory in practice:

- A coding agent that has learned to run tests before committing, not because a rule says to, but because skipping tests led to CI failures 73% of the time.

- A support agent that has learned to ask for the error log before suggesting solutions, because jumping straight to solutions without diagnostic data wastes an average of 4 messages.

- A data analysis agent that has learned to check for null values in the first column before running aggregations, because null values caused incorrect results in 40% of past queries.

These are not rules someone wrote. They are patterns extracted from experience. And that distinction matters because the space of useful behaviors is far too large to enumerate in advance. You cannot write a system prompt that covers every edge case. But you can build systems that learn from their own mistakes.

Key takeaway: Procedural memory encodes what to do, not what happened or what is true. It is the difference between knowing that rate limits exist and automatically applying exponential backoff when one hits.

Why Is Procedural Memory the Hardest to Build?#

Episodic memory is a storage problem. Semantic memory is an extraction problem. Procedural memory is a learning problem. And learning is hard.

The core challenge is attribution. When an agent succeeds, why did it succeed? Was it the specific approach it took, or was the problem just easy? When it fails, was it the wrong strategy, or was the environment hostile? Extracting reliable behavioral patterns from noisy outcomes requires more than simple logging.

There are three main approaches:

Pattern libraries are the most practical starting point. You manually define a set of behavioral rules and update them based on observed outcomes. This is essentially a curated playbook that evolves over time. The agent does not learn autonomously, but the engineering team learns on its behalf and encodes that learning as updated procedures.

const proceduralPatterns = [

{

name: "api_retry_backoff",

condition: (response) => response.status === 429,

action: async (attempt) => {

const delay = Math.pow(2, attempt) * 1000

await sleep(delay)

return retry()

},

stats: { applied: 143, succeeded: 124, failed: 19 }

},

{

name: "ask_for_logs_first",

condition: (message) => message.includes("error") || message.includes("not working"),

action: () => "Can you share the relevant error logs or stack trace?",

stats: { applied: 89, reduced_resolution_by: "3.2 messages avg" }

}

]

Reinforcement learning from feedback is more ambitious. The agent takes actions, receives feedback (explicit ratings, implicit success/failure signals), and adjusts its behavior accordingly. This is conceptually simple but practically complex. You need a reward signal, and most agent interactions do not have clean reward signals. Did the user leave because they were satisfied, or because they gave up?

Tool-use fine-tuning is the approach that major model providers are increasingly supporting. You collect traces of successful agent interactions, including which tools were called, in what order, with what parameters, and fine-tune the model on those traces. The procedural knowledge gets baked into the model weights rather than stored externally. This is the closest analog to how human procedural memory actually works. The knowledge becomes implicit rather than explicit.

The practical reality in 2026 is that most teams use pattern libraries with occasional fine-tuning. Pure reinforcement learning for agents is still largely a research endeavor. The feedback loops are too slow, the action spaces are too large, and the reward signals are too noisy for online RL to work reliably in production.

Key takeaway: Most production teams implement procedural memory as curated pattern libraries, not autonomous learning. The attribution problem (why did this succeed?) remains the core blocker for fully automated procedural learning.

How Do Hybrid Architectures Combine All Three?#

No single memory type is sufficient for a production agent. The real architecture question is how to combine them.

I think about this as a layered system, similar to the five pillars of agentic engineering where each layer handles a different concern:

Layer 1: Procedural memory loads first. Before the conversation even starts, the agent loads its behavioral rules. These are the muscle-memory patterns that shape how it will interact. Retry policies, diagnostic workflows, communication preferences. This layer is small (usually under 1,000 tokens) and changes slowly.

Layer 2: Semantic memory provides context. The agent loads relevant facts about the current user, project, or domain. This is the filing cabinet. "Customer uses PostgreSQL 15. Last issue was connection pooling. Billing plan is Enterprise." Again, compact. Usually 200 to 500 tokens per entity.

Layer 3: Episodic memory fills in the gaps. Only after procedural and semantic memory are loaded does the agent look at episodic memory. And crucially, it does not load everything. It uses the semantic context to determine which past sessions are relevant. If the current query is about database performance, it retrieves past sessions tagged with database topics. If it is a billing question, it retrieves billing sessions. This targeted retrieval keeps the episodic memory payload manageable.

Layer 4: The current conversation. This is the live interaction, with full fidelity, occupying whatever context remains after the first three layers.

The key insight is that each layer has a different token budget and a different update frequency:

| Layer | Token Budget | Update Frequency | Retention |

|---|---|---|---|

| Procedural | 500-1,000 | Weekly/Monthly | Permanent |

| Semantic | 200-2,000 | After each session | Until invalidated |

| Episodic | 5,000-20,000 | Real-time | Rolling window |

| Current | Remaining | Real-time | Session only |

This layered approach solves the context window problem. Instead of stuffing 90,000 tokens of raw conversation history into the context, you get 500 tokens of behavioral rules, 500 tokens of entity facts, and 10,000 tokens of carefully selected past episodes. The agent is better informed and uses fewer tokens.

The architecture also maps cleanly to the patterns I described in my post on building multi-agent systems. In a multi-agent setup, procedural memory can be shared across all agents (the team knows to retry on 429s). Semantic memory can be scoped per agent role (the database agent knows PostgreSQL details, the billing agent knows pricing plans). Episodic memory is typically per-session and shared through the orchestration layer.

Key takeaway: A hybrid architecture layers procedural rules (800 tokens), semantic facts (500 tokens), and targeted episodic retrieval (20K tokens) to use 70% fewer tokens than pure episodic replay while retaining most of the contextual richness.

Practical Implementation Guide#

Let me walk through how to actually build this. I will use a support agent as the running example, because it exercises all three memory types.

Step 1: Start with episodic memory#

Do not over-engineer. Start by storing conversations.

interface ConversationStore {

save(session: ConversationSession): Promise<void>

retrieve(query: {

userId: string

topic?: string

limit?: number

since?: Date

}): Promise<ConversationSession[]>

}

Use whatever database you have. PostgreSQL with pgvector works well. SQLite for local-first setups. The important thing is to store full sessions with metadata (userId, timestamp, topic tags, resolution status).

Step 2: Add semantic extraction#

After each session, run an extraction pass. This can be a background job that processes completed sessions.

async function extractSemanticFacts(

session: ConversationSession

): Promise<KnowledgeFact[]> {

const prompt = `

Review this support conversation and extract factual information.

Categories: technical_environment, preferences, known_issues,

contact_info, business_context.

Return JSON array of facts with entity, category, key, value,

and confidence score.

Conversation:

${formatSession(session)}

`

const facts = await llm.generate(prompt)

return mergeWithExisting(session.userId, facts)

}

The mergeWithExisting function is critical. When the extraction produces a fact that conflicts with an existing one (the user was on PostgreSQL 14, now mentions PostgreSQL 15), you need to resolve the conflict. Usually, newer information wins, but you should log the change for auditability.

Step 3: Build a procedural pattern registry#

Start with hand-written patterns based on your domain knowledge. Track their success rates over time.

class ProceduralRegistry {

private patterns: ProceduralPattern[] = []

register(pattern: ProceduralPattern): void {

this.patterns.push(pattern)

}

match(context: AgentContext): ProceduralPattern[] {

return this.patterns

.filter(p => p.condition(context))

.sort((a, b) => b.confidence - a.confidence)

}

recordOutcome(patternId: string, success: boolean): void {

const pattern = this.patterns.find(p => p.id === patternId)

if (pattern) {

pattern.stats.applied++

if (success) pattern.stats.succeeded++

pattern.confidence = pattern.stats.succeeded / pattern.stats.applied

}

}

}

Over time, you can add automated pattern discovery by analyzing sessions where the agent performed well. What did it do differently? What steps did it take that it does not take in unsuccessful sessions? This is where the learning happens.

Step 4: Wire them together with a memory router#

The memory router decides what to load into context based on the current situation.

async function buildAgentContext(

userId: string,

currentMessage: string,

maxTokens: number

): Promise<AgentContext> {

const budget = {

procedural: 800,

semantic: 1200,

episodic: Math.min(15000, maxTokens * 0.3),

reserved: maxTokens * 0.5 // for current conversation + response

}

// Layer 1: Always load procedural patterns

const procedures = proceduralRegistry.getActivePatterns()

// Layer 2: Load semantic facts for this user

const facts = await semanticStore.getFacts(userId)

// Layer 3: Retrieve relevant episodes

const relevantSessions = await episodicStore.retrieve({

userId,

topic: await classifyTopic(currentMessage),

limit: 5,

since: new Date(Date.now() - 30 * 24 * 60 * 60 * 1000) // 30 days

})

return {

systemPrompt: formatProcedural(procedures, budget.procedural),

userContext: formatSemantic(facts, budget.semantic),

history: formatEpisodic(relevantSessions, budget.episodic),

currentMessage

}

}

Notice the token budget allocation. Procedural memory gets 800 tokens because it is compact and high-value. Semantic memory gets 1,200 because per-user facts vary in volume. Episodic memory gets up to 30% of total context because historical sessions can be large but should not dominate. And 50% is reserved for the actual conversation and the model's response.

Step 5: Add feedback loops#

The final piece is closing the loop. After each session, update all three memory layers:

- Episodic: Store the new session.

- Semantic: Extract and merge new facts.

- Procedural: Record outcome data for any patterns that were applied.

This creates a virtuous cycle. Each interaction makes the agent a little smarter, a little more attuned to the user, and a little more efficient with its token budget.

What Does Each Memory Architecture Cost in Tokens?#

Token usage directly translates to cost and latency, so the architecture choice has real financial implications. The headline number: pure episodic replay costs $30/day versus $3/day for pure semantic, with a hybrid approach landing at $9/day. Let me run through the numbers.

Scenario: A support agent handling 100 sessions per day, average 15 turns per session, serving repeat customers.

Pure Episodic (Replay Everything)#

For a returning customer with 10 past sessions:

- Per-session episodic load: ~90,000 tokens

- Current session: ~9,000 tokens

- Total input per request: ~99,000 tokens

- At Claude's pricing ($3/M input tokens): $0.30 per session

- Daily cost for 100 sessions: $30.00

And that is just input tokens. Output tokens, tool calls, and the retrieval pipeline add to the bill. (For comparison, see OpenAI's pricing for similar per-token cost structures.) Worse, as customers accumulate more history, the cost grows linearly. After 30 sessions, that per-session input cost triples.

Pure Semantic (Facts Only)#

- Semantic context per user: ~500 tokens

- Current session: ~9,000 tokens

- Total input per request: ~9,500 tokens

- At Claude's pricing: $0.03 per session

- Daily cost for 100 sessions: $3.00

A 10x reduction. But you lose the nuance. The agent knows what is true but not what happened. It cannot reference the specific session where a workaround was discovered. It cannot replay the diagnostic steps that led to a resolution.

Hybrid (Procedural + Semantic + Targeted Episodic)#

- Procedural rules: ~800 tokens

- Semantic context: ~500 tokens

- Targeted episodic retrieval (2-3 relevant sessions): ~20,000 tokens

- Current session: ~9,000 tokens

- Total input per request: ~30,300 tokens

- At Claude's pricing: $0.09 per session

- Daily cost for 100 sessions: $9.00

The hybrid approach costs 70% less than pure episodic while retaining most of the contextual richness. The targeted episodic retrieval means you are only paying for history that is relevant to the current conversation, not every session the user has ever had.

Hidden costs to consider#

Token costs are the obvious metric, but there are others:

Extraction pipeline costs: Semantic memory requires an extraction pass after each session. If you use an LLM for extraction, that is an additional 10,000-15,000 tokens per session. At $0.03-0.05 per extraction, this adds $3-5 per day for 100 sessions. Still cheaper than the $21 per day saved by not replaying full episodic history.

Storage costs: Episodic memory requires storing full conversations. At scale, this is gigabytes of text per month. Semantic memory is orders of magnitude smaller. A knowledge graph with 10,000 entities and 50,000 facts fits comfortably in a few megabytes.

Latency: More input tokens means more time-to-first-token. A 99,000-token prompt takes noticeably longer to process than a 30,000-token prompt. For a support agent where response time matters, this difference is felt directly by users.

Accuracy: This is the counterintuitive one. More context does not always mean better answers. The "lost in the middle" problem means that a focused 30,000-token context with high-signal information often produces better responses than a 99,000-token context packed with everything including noise from irrelevant sessions.

How Do You Decide Which Memory Architecture to Use?#

Not every agent needs all three memory types. Here is a rough decision framework.

Use episodic memory alone when sessions are short, users rarely return, or the domain is narrow enough that full replay fits comfortably in the context window. A one-off coding assistant, a simple Q&A bot, a single-use form-filling agent. These do not need the complexity of semantic extraction or procedural learning.

Add semantic memory when you have returning users, when user-specific context matters, or when the volume of past interactions would overflow the context window. Customer support, account management, personal assistants, project management tools. Anywhere the agent needs to know who it is talking to without re-reading every past conversation.

Add procedural memory when the agent performs complex, multi-step tasks where the approach matters as much as the facts. Coding agents, diagnostic agents, workflow automation agents. Anywhere the agent can learn from past successes and failures to improve its behavior over time.

Use the full hybrid when you are building a long-lived agent that serves repeat users on complex tasks. This is the support agent, the engineering copilot, the research assistant that grows with you over months and years.

Where to Start#

If your agent has no memory today, do not try to build all three layers at once. Start with the smallest useful layer: semantic memory.

Add a key-value store for user preferences and environment facts. Store five to ten attributes per user. Inject them into the system prompt at the start of every session. That is your first semantic memory layer, and it will immediately eliminate the "tell me about your setup again" problem that frustrates returning users.

Episodic retrieval can wait until you have enough conversation history to make it worthwhile. Procedural patterns can wait until you have observed enough sessions to know which behaviors actually improve outcomes. Both layers are more valuable when built on top of a working semantic foundation.

If you have been following my writing on agentic engineering, you know that memory sits within a broader framework of context engineering, tool design, safety boundaries, and orchestration patterns. Memory is not the whole picture. But it is the layer most agents are missing entirely.

The question worth asking is not which memory type your agent needs. It is which memory type it is silently failing without.

FAQ#

What is the difference between episodic and semantic memory in AI agents?#

Episodic memory stores full records of past interactions, like a conversation log or session replay. Semantic memory stores extracted facts and knowledge, independent of when or how they were learned. Episodic memory answers "what happened?" while semantic memory answers "what is true?" In practice, episodic memory preserves nuance and sequence but uses many tokens, while semantic memory is compact but loses conversational context.

How much does agent memory cost in terms of token usage?#

It depends on the architecture. Pure episodic memory for a returning user with 10 past sessions can consume 90,000 or more input tokens per request. A hybrid approach using procedural rules, semantic facts, and targeted episodic retrieval typically uses 25,000 to 35,000 tokens. Pure semantic memory can run under 10,000 tokens. For a support agent handling 100 sessions per day, the difference between pure episodic and hybrid can be $20 or more per day in API costs.

Can I implement agent memory without fine-tuning a model?#

Yes. Episodic and semantic memory are entirely external to the model. You store conversations and facts in a database and inject them into the context window at inference time. Even procedural memory can be implemented externally using pattern libraries and rule registries. Fine-tuning is one approach to procedural memory (baking learned behaviors into model weights), but it is not the only one and not the most common in production today.

What is procedural memory for an AI agent?#

Procedural memory represents learned behaviors and skills, analogous to muscle memory in humans. Instead of remembering facts or events, the agent remembers what to do in specific situations. For example, an agent with procedural memory might automatically apply exponential backoff when it encounters a rate limit, or ask for error logs before suggesting solutions, because those approaches have succeeded in the past. It is the hardest memory type to implement because it requires learning from outcomes.

How do I handle stale or conflicting information in agent memory?#

Add a lastVerified timestamp to semantic facts and implement a conflict resolution strategy. When new information contradicts existing facts (user mentions PostgreSQL 16, but stored fact says PostgreSQL 15), the newer information should generally win, but log the change for auditing. For episodic memory, implement a sliding window that deprioritizes old sessions. For procedural memory, track success rates over time and deprecate patterns whose performance drops below a threshold.

Should I use a knowledge graph or a vector store for semantic memory?#

It depends on the complexity of relationships in your domain. Knowledge graphs excel when entities have rich, interconnected relationships (customer uses database, database runs on cloud provider, cloud provider has known issue). Vector stores work well when facts are relatively independent and you need fuzzy semantic matching. For most production systems, start with a simple key-value store per entity and upgrade to a knowledge graph only when you need relationship traversal. Vector stores are a good middle ground if you want flexible retrieval without the complexity of graph queries.

What is a hybrid memory architecture for AI agents?#

A hybrid memory architecture combines episodic, semantic, and procedural memory in a layered system. Procedural rules load first and define behavior. Semantic facts load second and provide user/domain context. Episodic memories load third, filtered for relevance to the current conversation. This approach uses tokens efficiently (typically 70% less than pure episodic replay) while maintaining the richness that comes from having multiple types of memory working together. The layered design also maps well to multi-agent systems where different agents can share procedural and semantic memory while maintaining separate episodic histories.