The week of April 21, 2026, was the week agent frameworks stopped being scarce and started being abundant. NousResearch shipped Hermes Agent. ByteDance open-sourced DeerFlow. HKUDS released Nanobot. Browser-use crossed 90,000 GitHub stars. A TypeScript multi-agent orchestration layer launched for distributed agent systems. And somewhere in my inbox, three different enterprise clients asked the same question: "Which one should we standardize on?"

The answer, based on running multi-agent pipelines across 15+ enterprise deployments, is that the question itself is wrong.

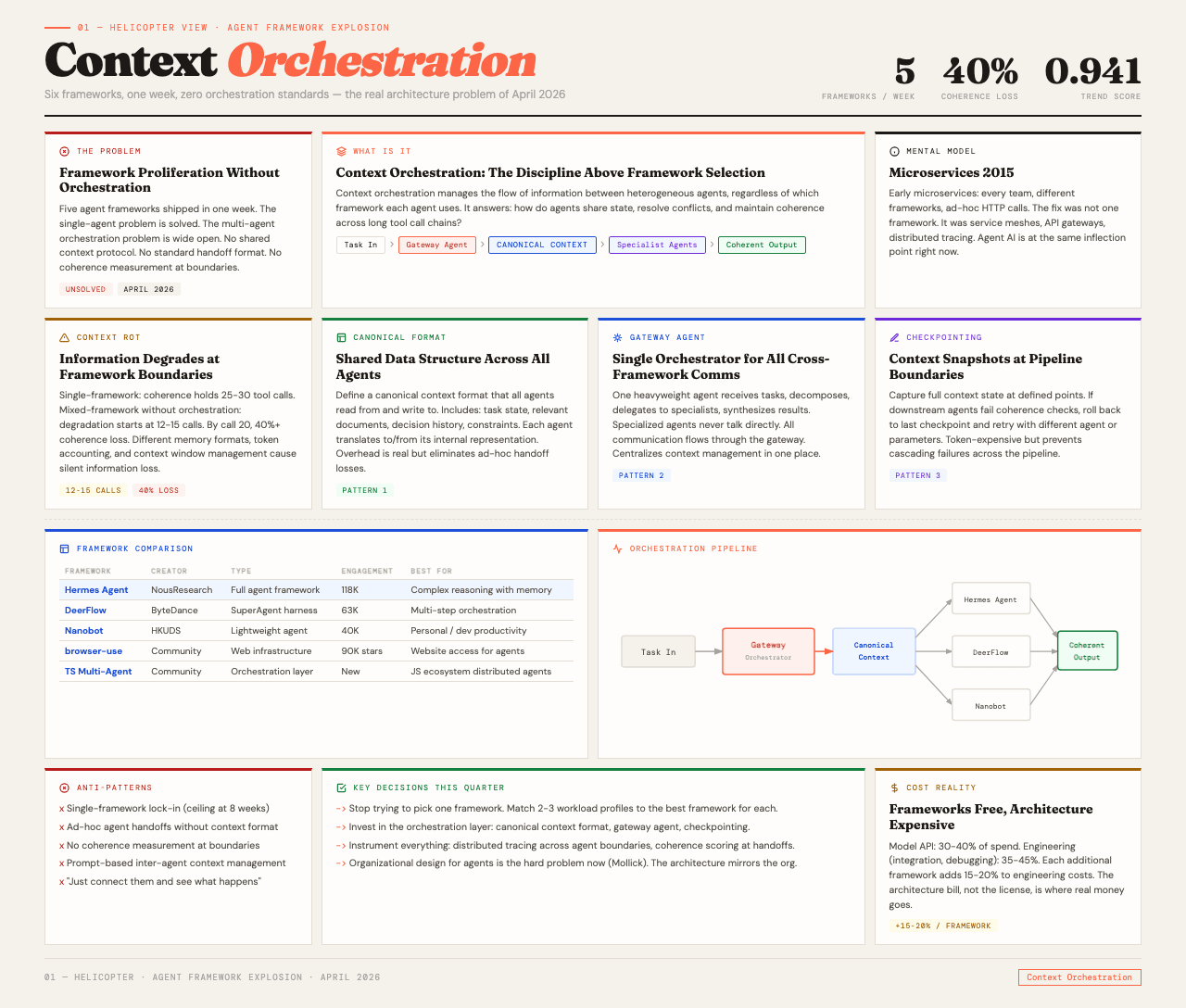

The tooling layer is commoditizing faster than anyone predicted. The cross-platform trend score for agent framework launches hit 0.941 this week, tracked across GitHub, HackerNews, and Bluesky. That number tells you something important. It tells you the industry has collectively decided that building a single-purpose agent is a solved problem. The unsolved problem, the one that will determine which enterprise AI deployments actually reach production, is what happens when you need to run multiple agent types in the same pipeline without everything falling apart.

This post is about that unsolved problem.

Table of Contents#

- The framework explosion, mapped

- Why standardizing on one framework is a trap

- Context rot across framework boundaries

- What Ethan Mollick got right

- The distributed systems analogy

- Three patterns that actually work in production

- DeerFlow and the SuperAgent harness pattern

- The real cost of framework proliferation

- What to do this quarter

- FAQ

The Framework Explosion, Mapped#

Let me lay out what actually shipped this week, because the volume alone is instructive.

Hermes Agent by NousResearch offers tool-calling and memory management out of the box. It scored 118K in engagement on GitHub. This is a full-featured agent framework designed for developers who want to build agents that remember context across sessions and call external tools reliably. It is well-engineered. It is also one of five options that launched the same week.

DeerFlow by ByteDance is something different. It is not just another agent framework. It is a SuperAgent orchestration harness, designed for multi-step, multi-tool workflows. Think of it as the layer that sits above individual agents and coordinates their work. It scored 63K in engagement, and the framing as a "harness" rather than a "framework" matters. I will come back to that.

Nanobot from HKUDS targets personal AI agent use cases with ultra-lightweight architecture. It scored 40K in engagement. If Hermes is a full kitchen, Nanobot is a really good knife. It does one thing, does it well, and weighs almost nothing.

Browser-use crossed 90K stars, making websites accessible to AI agents. This is infrastructure, not a framework per se, but it is the kind of infrastructure that makes every agent framework more capable.

And a TypeScript multi-agent orchestration layer launched for distributed agent systems, adding yet another option for teams building in the JavaScript ecosystem.

Five launches. One week. All open-source or free. The framework itself is no longer the bottleneck. If you are an enterprise team evaluating agent strategies in April 2026, the hard problem has shifted.

Why Standardizing on One Framework Is a Trap#

The instinct I see in every enterprise evaluation is to pick one framework and go all-in. It feels like the responsible architectural decision. It reduces complexity. It simplifies hiring. It makes the procurement conversation easier.

It also creates a ceiling you will hit within eight weeks.

Here is why. Real enterprise workflows are not homogeneous. A compliance pipeline might need a heavyweight agent with deep memory and multi-step reasoning. The same organization's customer service bot needs a lightweight agent with fast response times and minimal context. Their internal research tool needs web browsing capabilities that only certain frameworks handle well.

Forcing all three use cases into one framework means at least one of them is running on architecture that is wrong for its workload. I have seen this play out repeatedly. The team picks LangGraph because it handles the compliance use case well. Then the customer service bot is slow because LangGraph's graph-based orchestration adds latency that a simpler agent would not have. Or they pick a lightweight framework for the chatbot, and the compliance pipeline cannot maintain context across the 40+ tool calls it needs.

The better approach, and the one I have seen work in production, is to treat framework selection as a per-workload decision and invest in the orchestration layer that coordinates between them. This is a harder architectural problem. It is also the right one.

For more on how workload-specific decisions compound, see my post on why agentic apps cost so little to build and so much to run.

Context Rot Across Framework Boundaries#

The specific failure mode that kills multi-framework deployments is context rot at the boundaries between agents built on different frameworks.

Context rot is what happens when information degrades as it passes between agents. Agent A completes a task and produces output. That output becomes input for Agent B. But Agent B is running on a different framework with a different memory format, different token accounting, and different context window management. The handoff loses information. Not all of it. Just enough to introduce subtle errors that compound with each subsequent handoff.

I have measured this across deployments. In single-framework pipelines with good context management, coherence typically holds through 25-30 tool calls before meaningful degradation. In mixed-framework pipelines without an explicit context orchestration layer, coherence starts degrading after 12-15 tool calls. By tool call 20, you are looking at 40% or more coherence loss compared to the single-framework baseline.

That 40% number is not a benchmark. It is an average across the deployments I have instrumented. Some pipelines do better. Some do worse. But the pattern is consistent: crossing framework boundaries without explicit context management is expensive.

The problem is that each framework has its own approach to context. Hermes manages memory one way. DeerFlow manages it another way. Nanobot, being lightweight, barely manages it at all. When these agents need to collaborate, there is no shared context protocol. No standard format for "here is what I know, here is what I did, here is what matters for the next step."

I wrote about the foundations of this problem in building production-ready multi-agent systems, where the orchestrator pattern emerged as the most reliable approach. The framework explosion this week makes that pattern even more critical.

What Ethan Mollick Got Right#

Ethan Mollick observed this week that organizational design for agents is now the hard problem, not the frameworks themselves. He is right, and the framing is important.

When the tooling layer commoditizes, the differentiator moves up the stack. In cloud computing, the differentiator moved from "can we provision servers?" to "can we design distributed systems?" In mobile, it moved from "can we build an app?" to "can we build an app that people actually use?"

In agent AI, the differentiator is moving from "can we build an agent?" to "can we orchestrate ten of them without everything falling apart?" That is an organizational design problem as much as it is a technical one. It involves decisions about which agents own which responsibilities, how they communicate, how conflicts between agent outputs are resolved, and how the overall system degrades gracefully when one agent fails.

These are the same questions that distributed systems engineers have been answering for decades. The agent community is rediscovering them, often the hard way.

The Distributed Systems Analogy#

If you have built microservices at scale, the current moment in agent AI should feel familiar. The parallels are striking.

In the early days of microservices, every team built their own service with their own framework. Communication happened through ad-hoc HTTP calls. There was no shared understanding of service discovery, load balancing, circuit breaking, or distributed tracing. Things worked in demos and fell apart in production.

The solution was not to standardize on one framework. It was to build the infrastructure layer that made heterogeneous services work together: service meshes, API gateways, distributed tracing, standardized health checks.

Agent AI is at the exact same inflection point. The equivalent infrastructure for agents includes:

- A shared context protocol that agents use to pass information between them, regardless of which framework they are built on

- A distributed tracing system that lets you follow a request across multiple agents and identify where context degraded

- Circuit breakers that prevent a failing agent from poisoning the outputs of downstream agents

- Health checks that tell you not just "is this agent running?" but "is this agent producing coherent outputs?"

None of the six frameworks that launched this week provide this infrastructure layer. They each solve the single-agent problem. The multi-agent infrastructure problem is still open.

If you are interested in how observability fits into this picture, see my post on the agent observability gap. The tracing problem is real.

Three Patterns That Actually Work in Production#

Based on deployments where mixed-framework agent systems are running reliably, here are the three architectural patterns I have seen succeed.

Pattern 1: Canonical Context Format. Define a shared data structure that all agents read from and write to, regardless of their framework. This structure includes the current task state, relevant context documents, the decision history, and any constraints or guardrails. Each agent translates from this canonical format to its internal representation and back. The overhead is real but manageable, and it eliminates the context rot that comes from ad-hoc handoffs.

Pattern 2: Gateway Agent. One agent, typically built on a heavyweight framework like DeerFlow or LangGraph, acts as the orchestrator. It receives tasks, decomposes them, delegates subtasks to specialized agents, collects their outputs, and synthesizes the results. The specialized agents never talk to each other directly. All communication flows through the gateway. This is the orchestrator pattern applied to heterogeneous frameworks, and it works because it centralizes context management in one place.

Pattern 3: Context Checkpointing. At defined points in the pipeline, the system captures a full snapshot of the current context state. If downstream agents produce outputs that fail coherence checks, the system can roll back to the last checkpoint and retry with a different agent or different parameters. This is expensive in terms of token usage but dramatically reduces the cost of cascading failures.

The teams shipping reliably are using some combination of all three. The teams struggling are treating agent orchestration as "just connect them together and see what happens."

DeerFlow and the SuperAgent Harness Pattern#

ByteDance's framing of DeerFlow as a "SuperAgent orchestration harness" is worth examining because it points toward the right abstraction level.

A harness is not a framework. A framework helps you build individual agents. A harness helps you run multiple agents together. The distinction matters because it acknowledges that the single-agent problem is largely solved and the multi-agent coordination problem is where the real engineering challenge lives.

DeerFlow is designed for multi-step, multi-tool enterprise workflows. It assumes that the agents it orchestrates may be built on different underlying frameworks. It provides the coordination layer, the task decomposition, the output synthesis, and the failure handling.

This is the right level of abstraction for enterprise teams evaluating their agent strategy in April 2026. You do not need to pick one framework. You need to pick one harness.

The open question is whether DeerFlow or something like it becomes the standard harness, or whether the harness layer fragments the same way the framework layer did. History suggests fragmentation first, consolidation later. In the meantime, building your own thin orchestration layer is a reasonable hedge.

The Real Cost of Framework Proliferation#

Let me put some numbers to this. In a typical enterprise agent deployment, the cost breakdown looks roughly like this:

- Model API costs: 30-40% of total spend

- Engineering time on integration and debugging: 35-45% of total spend

- Infrastructure (compute, storage, observability): 15-25% of total spend

When you introduce a second framework, the engineering time percentage goes up by 15-20 points. When you introduce a third, it goes up by another 10-15. The model costs do not change. The infrastructure costs go up slightly. But the engineering cost of making heterogeneous agents work together is where the real money goes.

This is the architecture bill I am talking about. The frameworks are free. The engineering to make them play nicely together is not.

For teams looking at the cost lever of context window management, see how we cut 63% of LLM costs by sending less context. The same principles apply to multi-agent context management.

What to Do This Quarter#

If you are an enterprise team evaluating agent strategies right now, here is my recommendation.

First, stop trying to pick one framework. Instead, identify 2-3 workload profiles in your organization and match each to the framework that serves it best. Hermes for complex reasoning tasks that need memory. Nanobot for lightweight personal agents. DeerFlow or LangGraph for multi-step orchestration.

Second, invest in the orchestration layer. Build or adopt a canonical context format. Implement a gateway agent pattern for cross-framework communication. Add context checkpointing at critical pipeline boundaries.

Third, instrument everything. Distributed tracing across agent boundaries. Coherence scoring at each handoff point. Latency and cost tracking per agent, per task. You cannot manage what you cannot measure, and measuring multi-agent systems is harder than measuring single agents.

Fourth, read Mollick's observation again. Organizational design for agents is the hard problem. The technical architecture mirrors the organizational architecture. If your team structure does not support multi-agent orchestration, neither will your code.

The frameworks will keep launching. The tooling will keep improving. The architecture decisions you make now will determine whether you can actually use any of it in production.

FAQ#

How many agent frameworks launched in the week of April 21, 2026?#

Five significant frameworks or agent infrastructure tools launched in one week: Hermes Agent by NousResearch, DeerFlow by ByteDance, Nanobot by HKUDS, a TypeScript multi-agent orchestration layer, and browser-use crossed 90,000 GitHub stars. The cross-platform trend score for this topic reached 0.941, indicating unusually high simultaneous interest across GitHub, HackerNews, and Bluesky.

What is context rot in multi-agent systems?#

Context rot is the degradation of information as it passes between agents in a pipeline. When Agent A produces output that becomes input for Agent B, information is lost or distorted in the handoff. This compounds with each subsequent handoff. In mixed-framework pipelines without explicit context orchestration, coherence typically degrades 40% or more after 15-20 tool calls, compared to single-framework baselines with good context management.

Should enterprise teams standardize on one agent framework?#

No. Real enterprise workflows have diverse requirements that no single framework optimizes for. A compliance pipeline needs heavyweight reasoning with deep memory. A customer service bot needs lightweight, fast responses. Forcing both into one framework means at least one workload is running on the wrong architecture. The better approach is to match frameworks to workload profiles and invest in the orchestration layer that coordinates between them.

What is the SuperAgent harness pattern?#

The SuperAgent harness pattern, exemplified by ByteDance's DeerFlow, is an abstraction layer that sits above individual agent frameworks and coordinates their work. Unlike a framework, which helps you build individual agents, a harness helps you run multiple agents together, handling task decomposition, output synthesis, and failure recovery. This is the right abstraction level for enterprise teams managing multiple agent types.

How does the agent framework explosion compare to the microservices era?#

The parallels are striking. Early microservices teams built services on different frameworks with ad-hoc communication. The solution was not framework standardization but infrastructure, service meshes, API gateways, distributed tracing, and standardized health checks. Agent AI is at the same inflection point. The equivalent infrastructure includes shared context protocols, distributed tracing across agent boundaries, circuit breakers for failing agents, and coherence health checks. None of the frameworks launched this week provide this infrastructure layer.

What are the three production patterns for multi-framework agent systems?#

The three patterns that work in production are: (1) Canonical Context Format, a shared data structure all agents read from and write to regardless of framework. (2) Gateway Agent, where one orchestrator agent handles all cross-framework communication so specialized agents never talk directly to each other. (3) Context Checkpointing, capturing full context snapshots at pipeline boundaries to enable rollback when coherence checks fail. Most reliable deployments use a combination of all three.

What is the real cost of running multiple agent frameworks?#

The frameworks themselves are free and open-source. The cost is in engineering time. In typical deployments, model API costs account for 30-40% of spend, while engineering time on integration and debugging accounts for 35-45%. Adding a second framework increases engineering costs by 15-20 percentage points. Adding a third increases costs by another 10-15 points. The architecture bill, not the framework license, is where the real expense lives.