I have been running multi-agent experiments for months. Three agents on the same codebase. Different tasks. Different models. And the same problem every time: they step on each other. Merge conflicts on shared files. Context from one agent bleeding into another. Credentials leaking across sessions. No visibility into what each agent is actually doing.

Then Google quietly open-sourced Scion in early April 2026, and it addressed almost every pain point I had been working around with duct tape.

Table of Contents#

- What Is Scion

- The Problem It Solves

- The Manager-Worker Architecture

- Three Layers of Isolation

- Core Concepts: Groves, Templates, Harnesses, Runtimes

- The Five Design Principles

- How It Compares to Other Multi-Agent Tools

- What Breaks Without Orchestration

- Bounded Agency: The Real Lesson

- Getting Started

- FAQ

What Is Scion#

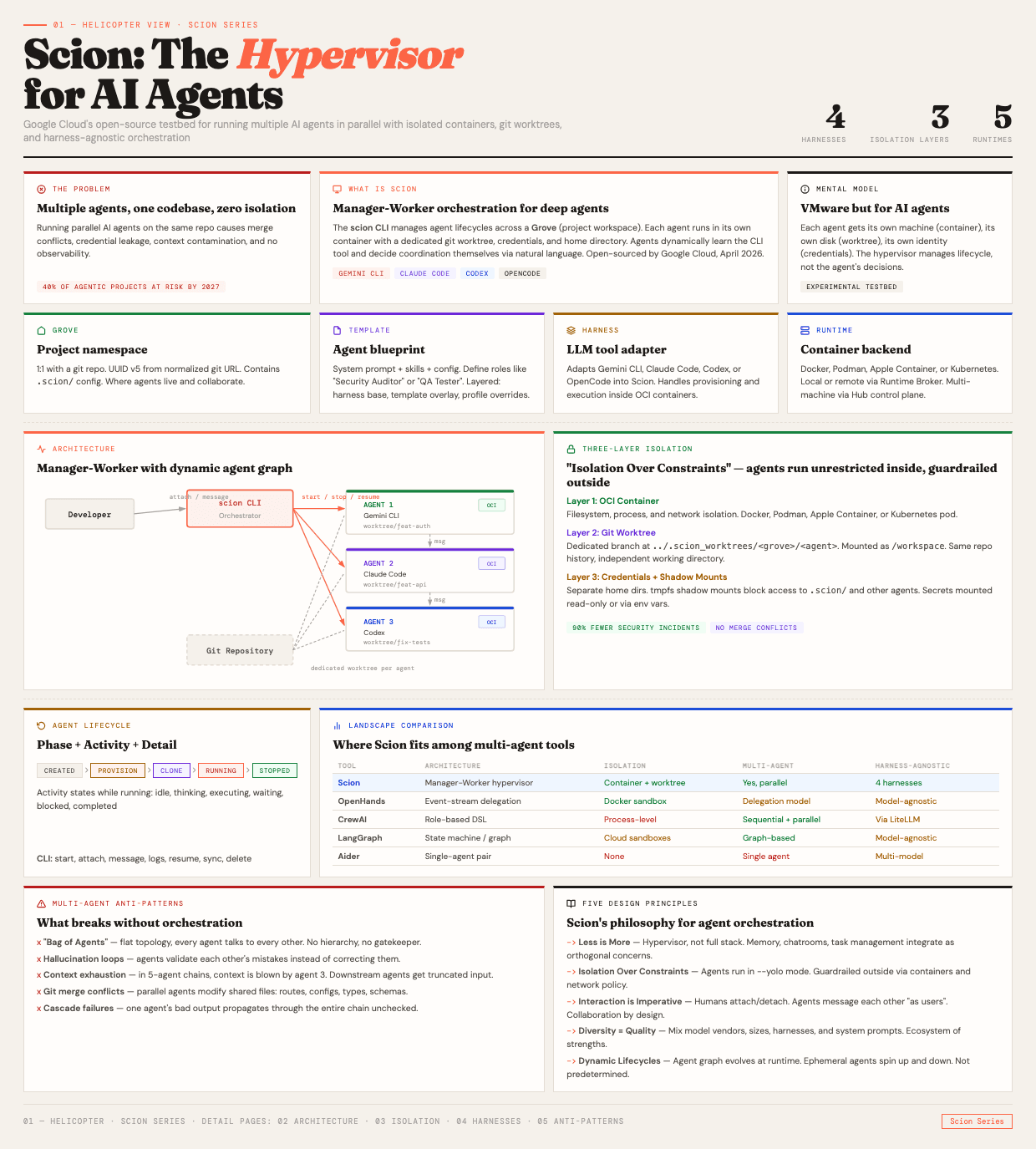

Scion is an experimental multi-agent orchestration testbed from Google Cloud. It manages concurrent LLM-based agents running in containers across your local machine, remote VMs, or Kubernetes clusters. The name comes from botany. A scion is a young shoot cut for grafting onto rootstock. The metaphor is deliberate. You graft specialized agents onto your project, each with its own role and isolation boundary.

The mental model is straightforward. Think of it as VMware but for AI coding agents. Each agent gets its own machine (container), its own disk (git worktree), and its own identity (credentials). The hypervisor manages lifecycle and coordination. It does not make decisions for the agents.

Google describes it as taking a "less is more" approach. Scion is a hypervisor, not a full orchestration stack. Memory, task management, and chatrooms are orthogonal concerns that you can integrate separately. The framework handles the hard parts of isolation, lifecycle management, and inter-agent communication. Everything else is left to the agents and the models driving them.

The Problem It Solves#

Running multiple AI agents on the same codebase is deceptively simple in theory and chaotic in practice. I have experienced every one of these failure modes firsthand, and I wrote about the management side of this in Before You Run 10 Claude Agents.

Merge conflicts. Two agents edit the same file. One rewrites a route handler while the other modifies its imports. You end up with a conflict that neither agent understands because neither saw the other's changes.

Credential leakage. Agent A has access to the production database. Agent B is supposed to be sandboxed to the test environment. Without proper isolation, a misconfigured environment variable gives Agent B production access. This is not hypothetical. I have seen it happen.

Context contamination. Agent A is working on the authentication module. Agent B is refactoring the payment system. Without isolation, files written by Agent A show up in Agent B's context window, confusing its reasoning about payment logic. And even with isolation, agents still forget everything between sessions unless you give them a proper memory layer.

Zero observability. Three agents running in parallel. Something breaks. Which agent caused it? When? What was it doing at the time? Without structured lifecycle management and logging, debugging multi-agent systems is archaeology.

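Gartner's number is sobering. It predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. In a multi-agent setup, much of that cost and risk comes from orchestration, not from model quality or prompt engineering.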

The Manager-Worker Architecture#

Scion follows a manager-worker pattern with a key twist: the workers decide how to coordinate.

The scion CLI is the host-side manager. It orchestrates agent lifecycles but does not control agent decisions. You use it to start, stop, resume, attach to, and message agents. It manages what Scion calls a "Grove," which is the project workspace where agents live.

Agents are isolated runtime containers running the actual LLM harness software: Claude Code, Gemini CLI, OpenAI Codex, or OpenCode. Each agent gets its own container, its own git worktree and branch, and its own credentials.

The interesting design choice is how agents learn to coordinate. Rather than prescribing rigid orchestration patterns, Scion exposes a CLI tool that agents dynamically discover by running `scion --help`. The models themselves decide how to use it. One agent might broadcast a message to all others when it finishes a task. Another might send a direct message to a specific agent requesting a code review. The coordination pattern emerges from the agents, not from the framework.

This is a deliberate philosophical choice. Google's position is that the graph of an agent swarm is dynamic and not practical to determine in advance. Agents range from specialized long-lived processes to highly ephemeral single-task workers. The mix changes dynamically. A rigid orchestration graph cannot accommodate this, so Scion does not try.

Here is what a typical workflow looks like:

```bash
# Initialize Scion in your project
scion init

# Start three agents with different roles
scion start auth "Implement OAuth2 login with Google" --attach
scion start api "Build the REST API for task management"
scion start tests "Write integration tests for the auth and API modules"

# Check on all agents
scion list

# Send a message to a specific agent
scion message tests "The auth module is ready for testing"

# View agent logs
scion logs api

# Sync changes from an agent's worktree
scion sync from auth
```

Each agent runs in its own tmux session inside its container. You can attach for human-in-the-loop interaction, detach to let it work autonomously, and enqueue messages while detached.
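To make the messaging model concrete, here is a toy in-memory sketch of direct messages, broadcasts, and messages that queue up while an agent is detached. It is only an illustration of the idea. The `Mailboxes` class and its method names are hypothetical and say nothing about how Scion implements messaging internally.

```python
from collections import deque


class Mailboxes:
    """Toy model of per-agent message queues (not Scion's implementation)."""

    def __init__(self, agents):
        # One FIFO queue per agent; messages wait here while the agent is busy.
        self.queues = {name: deque() for name in agents}

    def send(self, sender, recipient, text):
        # Direct message: lands only in the recipient's queue.
        self.queues[recipient].append((sender, text))

    def broadcast(self, sender, text):
        # Broadcast: every agent except the sender receives a copy.
        for name, queue in self.queues.items():
            if name != sender:
                queue.append((sender, text))

    def drain(self, agent):
        # Return queued messages in arrival order and empty the queue.
        messages = list(self.queues[agent])
        self.queues[agent].clear()
        return messages


boxes = Mailboxes(["auth", "api", "tests"])
boxes.send("auth", "tests", "The auth module is ready for testing")
boxes.broadcast("api", "REST API is up")
for sender, text in boxes.drain("tests"):
    print(f"{sender}: {text}")
```

The point of the queue is that the sender never blocks on the recipient. The message simply waits until the receiving agent next reads its inbox.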

Three Layers of Isolation#

Scion's isolation model has three distinct layers, and understanding them explains why the system works where ad-hoc multi-agent setups fail.

Layer 1: OCI Containers#

Each agent runs inside its own OCI-compliant container: Docker, Podman, Apple Container (native macOS virtualization), or a Kubernetes pod. This provides filesystem, process, and network isolation at the infrastructure level.

The container is the outer boundary. An agent cannot see other agents' processes, cannot access other agents' filesystems, and can be subject to network policy that restricts what it can reach. This is the same isolation model that the industry uses for production workloads, applied to AI agents.

Layer 2: Git Worktrees#

Each agent gets a dedicated git worktree created at `../.scion_worktrees/<grove>/<agent>` with its own branch. This worktree is mounted into the container as `/workspace`. All agents share the same repository history but have completely independent working directories.

This is the layer that prevents merge conflicts during parallel work. Agent A can rewrite the authentication module on its feat-auth branch while Agent B restructures the API on its feat-api branch. The changes only collide when you explicitly merge them, at which point you can resolve conflicts with full context about what each agent was trying to accomplish.
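If you want to reproduce the worktree layout by hand, the sketch below builds the path, branch name, and `git worktree add` command for one agent without running anything. The path layout follows the description above. The `feat-` branch prefix and the `worktree_plan` helper are assumptions for illustration, not Scion's actual naming scheme.

```python
from pathlib import PurePosixPath


def worktree_plan(grove: str, agent: str, branch_prefix: str = "feat-"):
    """Return the worktree path, branch name, and git argv for one agent."""
    # Path layout from the text: ../.scion_worktrees/<grove>/<agent>
    path = PurePosixPath("..") / ".scion_worktrees" / grove / agent
    # Branch naming is an assumption made for this sketch.
    branch = f"{branch_prefix}{agent}"
    # `git worktree add -b <branch> <path>` creates the branch and checks it out at <path>.
    argv = ["git", "worktree", "add", "-b", branch, str(path)]
    return str(path), branch, argv


for name in ("auth", "api"):
    path, branch, argv = worktree_plan("demo", name)
    print(" ".join(argv))
```

Each agent ends up with a distinct directory and a distinct branch, which is why parallel edits cannot collide until you merge.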

Layer 3: Credentials and Shadow Mounts#

Each agent has a dedicated home directory on the host, mounted into its container. Shadow mounts using tmpfs prevent agents from accessing the `.scion/` configuration directory or any other agent's workspace. Sensitive credentials are mounted read-only or injected via environment variables.

This is the layer that prevents credential leakage. Agent A's Anthropic API key is not visible to Agent B. Agent B's production database connection string is not visible to Agent A. The scion CLI manages credential projection into each container separately.
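A rough way to picture per-agent credential projection is to build a separate `docker run` command for each agent, with only that agent's mounts and secrets. The sketch below does this without executing anything. The mount targets, the `agent-` container name prefix, the image name, and the `container_args` helper are all assumptions for illustration. This is not how Scion itself invokes the runtime.

```python
def container_args(agent: str, home_dir: str, worktree: str, env: dict, image: str):
    """Build a `docker run` argv for one agent without executing it."""
    args = ["docker", "run", "--detach", "--name", f"agent-{agent}"]
    # Per-agent home directory and worktree; nothing from other agents is mounted.
    args += ["--volume", f"{home_dir}:/home/agent"]
    args += ["--volume", f"{worktree}:/workspace"]
    # An empty tmpfs shadows the config directory (assumed location inside /workspace).
    args += ["--tmpfs", "/workspace/.scion"]
    # Only this agent's secrets are injected as environment variables.
    for key, value in sorted(env.items()):
        args += ["--env", f"{key}={value}"]
    return args + [image]


auth = container_args(
    "auth", "/srv/homes/auth", "/srv/wt/auth",
    {"ANTHROPIC_API_KEY": "key-for-auth"}, "example/harness:latest",
)
api = container_args(
    "api", "/srv/homes/api", "/srv/wt/api",
    {"DATABASE_URL": "postgres://test-db"}, "example/harness:latest",
)
print(auth)
print(api)
```

Because each command is assembled from that agent's inputs alone, there is no shared environment for a stray variable to leak through. In a real setup you would likely pass secrets through read-only mounts or an env file so they do not appear in the process list.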

Scion's philosophy is "Isolation Over Constraints." Agents run in what Google calls `--yolo` mode inside their containers. They have full autonomy within their boundaries. But those boundaries are enforced at the infrastructure level, outside the agent's context. The agent does not need to know it is constrained. It just cannot escape its container.

Research suggests that sandboxed agents reduce security incidents by approximately 90% compared to agents running with unrestricted host access. The overhead is real but manageable. Container startup adds seconds, not minutes. And the alternative, an agent with production credentials modifying shared files, is categorically worse.

Core Concepts: Groves, Templates, Harnesses, Runtimes#

Scion introduces four abstractions that work together. Each one addresses a specific concern in multi-agent orchestration.

Groves#

A Grove is a project namespace. It corresponds to a `.scion/` directory on the filesystem and is typically 1:1 with a git repository. Each Grove has a deterministic UUID v5 identifier derived from the normalized git remote URL. Groves can exist at the project level or globally for cross-project agents.
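The deterministic identifier is easy to demonstrate with Python's standard `uuid` module. The sketch below is a minimal illustration, assuming a simple normalization and the standard URL namespace. Scion's real normalization rules and namespace may differ, and `normalize_remote` and `grove_id` are hypothetical names.

```python
import uuid


def normalize_remote(url: str) -> str:
    """Reduce common git remote spellings to one form (assumed rules)."""
    # Lowercasing the whole URL is a simplification; some hosts are case-sensitive.
    url = url.strip().lower()
    for prefix in ("https://", "http://", "ssh://"):
        if url.startswith(prefix):
            url = url[len(prefix):]
    if url.startswith("git@"):
        # git@host:owner/repo -> host/owner/repo
        url = url[len("git@"):].replace(":", "/", 1)
    url = url.rstrip("/")
    if url.endswith(".git"):
        url = url[: -len(".git")]
    return url


def grove_id(remote_url: str) -> uuid.UUID:
    # uuid5 hashes (namespace, name) with SHA-1, so equal inputs give equal IDs.
    return uuid.uuid5(uuid.NAMESPACE_URL, normalize_remote(remote_url))


print(grove_id("git@github.com:example/repo.git"))
print(grove_id("https://github.com/example/repo"))
```

Both calls print the same UUID, because the two spellings normalize to the same string. That is what lets every clone of a repository agree on its Grove without a central registry.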

Templates#

A Template is a blueprint for creating agents. It defines the system prompt, the skills available to the agent, and the base configuration. You might have a "Security Auditor" template with a system prompt focused on vulnerability scanning and a "QA Tester" template focused on test generation.

Templates are harness-agnostic. The same role definition works whether the underlying agent is Claude Code, Gemini CLI, or Codex. Templates are layered: harness-config base, then template overlay, then profile overrides. This means you can have a generic "reviewer" template that works with any harness and a Claude-specific configuration that adds Claude Code skills on top.
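The layering order can be sketched as a left-to-right merge where later layers win. This is a minimal illustration of the idea, not Scion's merge logic, and every key name in the example dictionaries is made up.

```python
def merge_layers(*layers: dict) -> dict:
    """Merge config layers left to right; later layers win, nested dicts merge recursively."""
    result: dict = {}
    for layer in layers:
        for key, value in layer.items():
            if isinstance(value, dict) and isinstance(result.get(key), dict):
                # Both sides are dicts: merge them instead of replacing wholesale.
                result[key] = merge_layers(result[key], value)
            else:
                # Scalars and lists are replaced by the later layer.
                result[key] = value
    return result


# Hypothetical layers: harness-config base, then template overlay, then profile overrides.
harness_base = {"harness": "claude-code", "env": {"TERM": "xterm"}, "skills": []}
template = {"system_prompt": "You review code for defects.", "skills": ["review"]}
profile = {"env": {"LOG_LEVEL": "debug"}}

config = merge_layers(harness_base, template, profile)
print(config)
```

Swapping `harness_base` for a different harness leaves the template and profile untouched, which is the practical meaning of harness-agnostic here.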

Harnesses#

A Harness adapts a specific LLM tool into Scion. It handles provisioning, configuration, and execution inside the OCI container. If the harness concept sounds familiar, it is the same idea behind OpenAI's Harness Engineering approach, applied at the container level rather than the repository level. Scion currently supports four harnesses:

| Harness | Resume | Hooks | OpenTelemetry | System Prompt Override |
|---|---|---|---|---|
| Gemini CLI | Yes | Yes | Yes | Yes |
| Claude Code | Yes | Yes | Yes | Yes |
| OpenCode | Yes | No | No | No |
| Codex | Yes | No | Yes | No |

Gemini CLI and Claude Code are the most fully supported, with hooks for lifecycle events and OpenTelemetry for observability. The harness system is extensible via a plugin architecture built on hashicorp/go-plugin communicating over gRPC.

Runtimes#

A Runtime is the container backend that actually executes agents. Scion supports Docker, Podman, Apple Container (using the native macOS Virtualization Framework), and Kubernetes. You can run agents locally during development and on Kubernetes in production.

For multi-machine orchestration, Scion introduces two additional concepts. A Hub is a central control plane that manages identity, state persistence, and collaboration. A Runtime Broker is a machine (laptop or VM) that registers with a Hub to provide execution capacity. This allows you to distribute agent workloads across multiple machines.

The Five Design Principles#

Scion's design principles are worth examining because they reflect hard-won lessons about what works and what does not in multi-agent systems.

1. Less is More. Scion is a hypervisor, not a full stack. It manages agent lifecycle, isolation, and communication. Memory systems, task management, chatrooms, and other concerns are orthogonal and integrate separately. This prevents the framework from becoming an opinionated monolith that conflicts with how you actually want your agents to work.

2. Isolation Over Constraints. Give agents full autonomy inside their boundaries. Do not try to constrain the agent at the prompt level. Constrain it at the infrastructure level. An agent that does not know it is sandboxed will use its full capability within the sandbox. An agent told "do not access production" in its system prompt might still do it.

3. Interaction is Imperative. Larger complex projects need collaboration. Scion allows humans to attach directly to agent sessions and provides mechanisms for agents to message each other "as users." This is not a fully autonomous system. It is a collaborative system with human oversight built in.

4. Diversity Results in Higher Quality. Different model vendors, model sizes, harnesses, and system prompts bring different strengths. A Gemini agent reviewing code written by a Claude agent catches different issues than Claude reviewing its own code. Scion is designed to make this mix practical rather than painful.

5. Agent Lifecycles are Dynamic. The topology of an agent swarm changes at runtime. Some agents are long-lived specialists. Others are ephemeral workers that spin up for a single task and shut down. The framework accommodates both without requiring you to predetermine the graph.

How It Compares to Other Multi-Agent Tools#

The multi-agent orchestration space has grown rapidly. Here is where Scion fits relative to the major alternatives as of April 2026.

| Tool | Architecture | Isolation | Multi-Agent | Harness-Agnostic |
|---|---|---|---|---|
| Scion | Manager-Worker hypervisor | Container + worktree | Parallel, dynamic graph | 4 harnesses |
| OpenHands | Event-stream delegation | Docker sandbox | Delegation model | Model-agnostic |
| CrewAI | Role-based DSL | Process-level | Sequential + parallel | Via LiteLLM |
| LangGraph | State machine / graph | Cloud sandboxes | Graph-based | Model-agnostic |
| AutoGen | Conversational multi-agent | Process-level | Group chat pattern | OpenAI-centric |
| Aider | Single-agent pair programmer | None | Single agent only | Multi-model |

Scion's unique position is the combination of true container isolation and harness agnosticism. Most multi-agent frameworks either provide no real isolation (CrewAI, AutoGen, Aider) or are tied to their own agent stack (OpenHands with its own agent, LangGraph with LangChain). Scion lets you run a Claude Code agent alongside a Gemini agent alongside a Codex agent, each in its own isolated container, coordinating through a shared protocol.

The trade-off is maturity. Scion is experimental. OpenHands has 70,000+ GitHub stars and a production-grade SDK. LangGraph has LangSmith observability and checkpointing built in. CrewAI can be set up in 20 lines of code. Scion requires a container runtime, a Go installation, and comfort with containerized workflows. It is a testbed, not a production-ready framework. Google is explicit about this.

What Breaks Without Orchestration#

Multi-agent systems fail in predictable ways. Understanding these failure modes is more valuable than understanding any specific tool.

The "Bag of Agents" anti-pattern. Flat topology. Every agent can talk to every other agent. No hierarchy. No gatekeeper. The result is chaos. Agents repeat work, contradict each other, and waste tokens on coordination overhead that exceeds the value of parallelism.

Hallucination loops. Agent A generates a claim. Agent B validates it without independent verification. Agent A sees the validation and reinforces the claim. The error compounds through the chain. In one experiment, researchers found that three agents echoing each other could sustain a completely fabricated technical claim through five rounds of "peer review."

Context window exhaustion. In a five-agent chain, the context window is typically exhausted by the third agent. Later agents operate on truncated input, missing critical context from earlier in the chain. The mitigation is to have each agent produce a concise summary alongside its full output. Downstream agents get summaries by default and can request full output when needed.
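The summary-by-default mitigation can be sketched in a few lines. This is an illustrative model only. `StepOutput` and `build_context` are hypothetical names, and a real pipeline would also need to budget tokens rather than characters.

```python
from dataclasses import dataclass


@dataclass
class StepOutput:
    agent: str
    summary: str  # short digest passed downstream by default
    full: str     # complete output, used only when explicitly requested


def build_context(history: list[StepOutput], expand: frozenset[str] = frozenset()) -> str:
    """Assemble downstream input from summaries, expanding only the named agents."""
    parts = []
    for step in history:
        body = step.full if step.agent in expand else step.summary
        parts.append(f"[{step.agent}] {body}")
    return "\n".join(parts)


history = [
    StepOutput("plan", "Use OAuth2 with Google.", "long planning notes " * 100),
    StepOutput("impl", "Added the login route.", "full diff text " * 300),
]
print(len(build_context(history)))                             # summaries only
print(len(build_context(history, expand=frozenset({"impl"})))) # one step expanded
```

The downstream agent sees a compact digest of the whole chain and pays the full context cost only for the steps it actually needs.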

Git merge conflicts on shared files. This is the most common practical failure. Two agents working in parallel will frequently collide on route definitions, configuration files, barrel exports, type definitions, and database schemas. Scion's worktree isolation addresses this by keeping changes on separate branches, but you still need to merge eventually. The best practice is additive-only changes where possible and early merging.
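One cheap way to act on this before merging is to compare the set of files each agent has touched and flag the overlaps. The sketch below assumes you already have those file lists, for example from `git diff --name-only main...<branch>` run once per agent branch. The `shared_files` helper and the sample paths are hypothetical.

```python
from collections import defaultdict


def shared_files(changes: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each file touched by more than one agent to the agents that touched it."""
    owners = defaultdict(list)
    # Iterate agents in sorted order so the reported owner lists are stable.
    for agent in sorted(changes):
        for path in changes[agent]:
            owners[path].append(agent)
    return {path: agents for path, agents in owners.items() if len(agents) > 1}


changes = {
    "auth": {"src/routes.ts", "src/auth/login.ts"},
    "api": {"src/routes.ts", "src/api/tasks.ts"},
    "tests": {"tests/auth.test.ts"},
}
for path, agents in shared_files(changes).items():
    print(f"{path} is edited by: {', '.join(agents)}")
```

Any file that shows up here is a candidate for merging early, or for reassigning to a single agent.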

Cascade failures. One agent produces bad output. The next agent in the chain uses it as input. The error propagates and amplifies through every subsequent agent. Independent quality gates between agents are essential. Each handoff point should verify that the input meets minimum quality thresholds before processing.
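A quality gate between handoffs can be as simple as a list of checks that must pass before the next stage runs. The sketch below is one possible shape, with stages modeled as plain functions. `run_chain`, `GateError`, and the example checks are hypothetical, and real checks would be things like running tests or validating a schema.

```python
from typing import Callable

Check = Callable[[str], bool]
Stage = tuple[str, Callable[[str], str], list[Check]]


class GateError(Exception):
    """Raised when a handoff fails a check, stopping the chain early."""


def run_chain(task: str, stages: list[Stage]) -> str:
    """Run stages in order; each stage's output must pass its checks before handoff."""
    data = task
    for name, stage, checks in stages:
        data = stage(data)
        for check in checks:
            if not check(data):
                # Fail fast so bad output never reaches the next agent.
                raise GateError(f"stage {name!r} failed {check.__name__}")
    return data


def non_empty(text: str) -> bool:
    return bool(text.strip())


def no_todo(text: str) -> bool:
    return "TODO" not in text


result = run_chain("spec", [
    ("draft", lambda s: s + " -> draft", [non_empty]),
    ("final", lambda s: s + " -> final", [non_empty, no_todo]),
])
print(result)
```

Failing at the gate keeps the error local to the stage that produced it, instead of letting every later agent build on it.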

The industry numbers are stark. Over 40% of agentic AI projects risk cancellation by 2027, according to Gartner. The model is not the bottleneck. The orchestration is. The 9 Agentic LLM Workflow Patterns post covers the architectural patterns that help you get orchestration right before scaling to multiple agents.

Bounded Agency: The Real Lesson#

Matthew Skelton made a point at QCon London in March 2026 that I keep coming back to. 80% of firms report no tangible benefit from AI adoption. His argument is that the problem is not the technology. It is that organizations have never learned to delegate agency with guardrails.

This maps directly to what Scion is doing at the infrastructure level. Define what the agent can access. Define what the agent cannot do. Enforce those boundaries outside the agent's context. Then give the agent full autonomy within those boundaries.

The teams that ship agentic systems successfully are the ones that define what the agent cannot do before they define what it can do. Negative constraints compound. Positive capabilities are table stakes. The Five Pillars of Agentic Engineering covers this same principle from the codebase and validation side. Scion applies it at the infrastructure level.

Three questions to answer before any agent deployment:

- What data can this agent never access?

- What actions require human approval regardless of confidence?

- What is the blast radius if this agent is completely wrong?

If you cannot answer all three in one sentence each, you are not ready to deploy.

Getting Started#

Scion requires Go and a container runtime (Docker, Podman, or Apple Container on macOS).

```bash
# Install Scion
go install github.com/GoogleCloudPlatform/scion/cmd/scion@latest

# Initialize machine-wide config
scion init --machine

# Initialize a project
cd your-project
scion init

# Start your first agent
scion start debug "Help me debug this error" --attach
```

The official documentation covers profiles, runtimes, templates, and the Hub architecture. The demo game "Relics of Athenaeum" is worth running. It demonstrates multi-agent coordination defined entirely in markdown, with agents collaborating to solve puzzles through group and direct messaging.

Scion is experimental. Local mode is relatively stable. Hub-based workflows are roughly 80% verified. The Kubernetes runtime has rough edges. Use it as a testbed to explore multi-agent patterns, not as production infrastructure. That is its stated purpose and that is where it delivers value today.

For the production side of multi-agent architecture, including state management and error handling patterns that complement what Scion provides at the infrastructure level, see Building Production-Ready Multi-Agent Systems.

FAQ#

Is Scion a production-ready orchestration framework?#

No. Google is explicit that Scion is an experimental testbed. Local mode is stable enough for daily development work, but the Hub and Kubernetes features are still being hardened. Use it for exploring multi-agent patterns and building intuition about isolation and coordination. For production multi-agent systems, you would want the isolation principles Scion demonstrates but built into your own infrastructure.

Can I mix different LLM providers in the same project?#

Yes. This is one of Scion's key differentiators. You can run a Claude Code agent for writing code, a Gemini CLI agent for code review, and a Codex agent for testing, all in the same Grove, each in its own container. The template system is harness-agnostic, so the same role definition adapts to different underlying agents.

How does Scion handle merge conflicts between agents?#

Each agent works on its own git worktree branch. Changes do not collide during parallel work. When you are ready to integrate changes, you use `scion sync` to pull changes from an agent's worktree into the main branch. Merge conflicts are resolved at this point, with full context about what each agent was doing. The best practice is to assign agents tasks that touch different files and to merge early and often.

What is the overhead of running agents in containers?#

Container startup adds a few seconds per agent. Memory overhead depends on the harness. Claude Code and Gemini CLI each consume roughly 200 to 500 MB of RAM per container, depending on context size. For a three-agent setup, that works out to roughly 0.6 to 1.5 GB, so budgeting 1 to 2 GB of additional memory leaves headroom. The trade-off is worth it for the isolation guarantees.

How is this different from just running multiple terminal windows?#

Three critical differences. First, isolation. Multiple terminals share the same filesystem, credentials, and environment variables. Scion containers do not. Second, lifecycle management. Scion tracks agent state, supports resume, and provides structured logging. Multiple terminals give you no visibility. Third, coordination. Scion provides a messaging system for inter-agent communication. Multiple terminals require manual copy-paste.

Do I need Kubernetes to use Scion?#

No. Scion works with Docker or Podman on any machine. Apple Container works on macOS. Kubernetes is an additional runtime option for distributed agent workloads across multiple machines. Most users will start and stay with local Docker.

Can agents spawn other agents?#

Yes. Because templates can contain full environment definitions, an agent can use the scion CLI to start sub-agents for specific tasks. A "lead developer" agent could start a "security reviewer" agent to audit its code, wait for the review, and act on the feedback. The agent graph evolves dynamically at runtime.