Last week I watched a group chat of enterprise architects unravel in real time.

One of them had spent the last fourteen months leading an internal platform team building a proprietary agent orchestrator. Custom planner. Custom tool loop. Custom memory store. Custom trace viewer. The kind of thing that gets a slide at the quarterly review and a line in the board deck under "AI capability build out." They were weeks away from a v1 release.

Then GitHub shipped the Copilot SDK and the Agent HQ surface into public preview. MIT license. Five languages. Bring your own model. Built by the same team that ships Copilot for twenty million developers. Around eight thousand stars on the first weekend.

The architect typed three words into the chat and went quiet.

What happened to his team is going to happen to a lot of teams this year, and I want to write about it honestly, because the instinct when something like this lands is to argue that your work was still necessary. That framing misses the point. The point is that the thing you thought was your moat was never actually your moat. It was a commodity you mistook for a differentiator because nobody else had commoditized it yet. Last week somebody did.

If you are running an AI platform team inside an enterprise, or a Global Capability Center, or any org that has been paying humans to reinvent LangGraph for the last year, this post is for you.

Table of Contents#

- What actually happened last week

- The two components of an agent system

- Why the harness went commodity faster than anyone expected

- The enterprise agent tax, stated plainly

- What Walmart got right that most of us got wrong

- Rent the runtime, own the workflow

- The budget table that should be on your desk Monday morning

- Steelman for the people still building their own

- What to do this week if you are stuck in the wrong layer

- FAQ

What actually happened last week#

The headline event was the Copilot SDK going into public preview. But the SDK is not the interesting part on its own. The interesting part is the full stack that is now available to any team that wants it, at zero license cost, backed by a vendor that is not going anywhere.

The orchestration layer is covered by LangGraph, Mastra, Dify, CrewAI, Pydantic AI, the Microsoft Agent Framework, and now Copilot SDK. Several of these have tens of thousands of stars and venture money measured in the hundreds of millions. The memory layer is covered by Mem0, Zep, Letta, Cognee, and a long tail of pgvector and Redis recipes. The tool layer has converged on Model Context Protocol, which every major vendor now supports. The trace layer is Langfuse, Phoenix, and the OpenTelemetry GenAI spec that landed in the last six months.

None of these existed as a coherent stack eighteen months ago. If you started a proprietary framework project in early 2025, the decision was defensible. You were filling a gap. The gap is gone now. Somebody filled it while you were in standups.

The question is what you do next, not whether the event happened.

The two components of an agent system#

When I talk to enterprise architects about this, I find it helps to split an agent system into exactly two components, because the split clarifies what is commodity and what is not.

The first component is the harness. The harness is everything that is true about every agent system regardless of what the agent does. It is the planner loop. The tool calling surface. The token accounting. The retry logic. The context compaction strategy. The memory interface. The trace and replay machinery. The sandboxing model. These things have nothing to do with whether you are building a claims adjudication agent or a customer support copilot. They are the scaffolding that sits underneath any agent that ships.

The second component is the workflow. The workflow is the part that is specific to your business. It is the state machine that models your claims process. It is the policy around what the agent is allowed to say to a regulated user. It is the escalation rules. It is the integration into your system of record. It is the evaluation suite that reflects your actual quality bar. It is the feedback loop from your real users. The workflow is where the differentiation lives, because no two enterprises have the same workflow even when they are in the same industry.

Every agent system is some combination of these two components. The question is how much of your engineering budget is going into each one.

If you were paying attention in 2025, you were probably spending sixty to eighty percent of your agent platform budget on the harness. That made sense when the harness was genuinely hard and nobody had open sourced a complete solution. It does not make sense now. It made sense for exactly the same reason it made sense to build your own database in 1995 and stopped making sense by 2005. The layer commoditized and the smart money moved up the stack.

I wrote about the agent-side version of this earlier in the year in OpenAI's Harness Engineering Explained. The piece focused on the small-team angle. The principle is the same at enterprise scale. The harness is scaffolding. Scaffolding is not a moat.

Why the harness went commodity faster than anyone expected#

There is a specific reason this happened on the timeline it did, and it is worth naming.

The agent harness problem turns out to be a classic instance of a solved-once-then-cloned problem. Once somebody figured out the right abstractions for planner plus tool loop plus memory plus traces, those abstractions were easy to copy. LangGraph crystallized a lot of the hard thinking in mid-2024. Every subsequent framework essentially agreed on the same five or six primitives with different names and different ergonomics. The academic work on ReAct, Reflexion, and tool-augmented reasoning was already public. The engineering work to turn those papers into production code was nontrivial but not actually proprietary.

Compare this to something like a distributed database. A distributed database has decades of hard tradeoffs embedded in it. Two engineers can look at the same whitepaper and still spend three years each building incompatible implementations. Agent harnesses are not like that. The design space is smaller than it looks. Once three or four well-funded teams converge on the shape of the right answer, the rest is execution, and execution is what open source is for.

The second reason is that the hyperscalers and foundation model vendors are structurally motivated to make the harness free. OpenAI does not make money from you paying for your orchestration layer. It makes money from you burning tokens. Every layer on top of the model that charges you money is a layer that reduces the tokens you can afford to burn. This is why the SDKs from Anthropic, OpenAI, and Google are all better than they were a year ago, and why the Microsoft Agent Framework consolidated AutoGen and Semantic Kernel in the fall and open sourced the result. The vendors want the harness to be free. They got their wish.

The third reason is GitHub specifically. Any time GitHub ships a developer tool at public preview, a bunch of adjacent proprietary projects die within ninety days, because GitHub has twenty million developers of distribution and most other tools do not. This is how Actions killed a generation of CI vendors. It is how Codespaces pressured a generation of cloud IDE startups. It is how Copilot ended the conversation about whether AI would write code. The Copilot SDK is the same playbook. I do not expect every proprietary agent framework to die, but I do expect the conversation in enterprise buying committees to change in the next quarter.

The enterprise agent tax, stated plainly#

Let me put a number on the thing that is actually happening.

There are roughly fifteen hundred Global Capability Centers in India alone. There are several hundred more in other locations. A meaningful percentage of those, call it a quarter to a third, have been running some flavor of internal agent platform work since late 2024. The team size on these projects ranges from six to thirty engineers. The median is around twelve. The median fully loaded cost per engineer in a GCC is somewhere between seventy and a hundred and fifty thousand dollars a year depending on location and seniority. Multiply that out and you land at something uncomfortable.

If I had to put a single number on the aggregate spend that is currently going into reinventing the harness layer across enterprise captives, I would call it a billion dollars a year, and I think that is a conservative estimate. You can quibble with my multipliers. The order of magnitude is defensible. The exact shape of the waste is not.

I have been calling this the agent tax in private conversations for a few months. It is the tax enterprises pay when they build things that are already commodities because the procurement process cannot distinguish between a commodity and a capability. The person who greenlit the proprietary orchestrator in January 2025 was not wrong at the time. The person still building it in April 2026 is paying the tax with every sprint they complete.

The good news about taxes is that once you know you are paying one, you can usually stop paying it.

What Walmart got right that most of us got wrong#

The reason I am bullish on enterprise agent work despite the commoditization is that a small number of enterprises figured out the right structure early, and their work is already paying off.

Walmart's internal agent platform, the one they call WIBEY in public talks, is the cleanest example I have seen. The core architectural decision they made, and this is the decision that looks smarter by the week, was to treat the harness as federated infrastructure rather than proprietary software. Teams inside Walmart are allowed to pick their own agent runtime as long as the runtime speaks Model Context Protocol for tools and A2A for agent to agent messaging. The central platform team does not write the runtime. They write the contracts and the evaluation harness and the safety layer that wraps around whatever the team picks.

That decision put Walmart in a position where last week's news is a pure upside. Every new open source harness that lands is another option their internal teams can adopt without any central platform team needing to approve or rebuild. The central team keeps owning the things that actually matter for a retailer at Walmart's scale, which are the workflows, the evaluation, the data access policies, and the catalog of tools wrapped around their systems of record.

Goldman's GS AI Platform is a slightly different version of the same bet. Goldman built infrastructure to route across models and enforce policy. They deliberately did not build a proprietary orchestration framework. They integrated with the public ones and spent their engineering budget on the parts of the stack that are specifically Goldman, which is the compliance envelope and the integration with their internal data.

The lesson from both is the same. If you architected your platform so that the harness layer is something you rent from the open source ecosystem, every open source release is free R&D. If you architected it so the harness is something you own, every open source release is a sprint tax. The architectural decision is doing more work than any individual engineering choice.

For a longer version of why this architectural decision matters at the system design level, Building Multi-Agent Systems covers the coordination patterns and isolation models that any enterprise platform has to solve regardless of which orchestrator they pick.

Rent the runtime, own the workflow#

I have been repeating this phrase in every conversation about this for about two months, and I am going to put it in the post because I think it is the cleanest summary of the playbook for enterprises in 2026.

Rent the runtime. Own the workflow.

Renting the runtime means picking an agent harness that already has ten thousand stars, an active maintainer community, a security disclosure process, and at least one production deployment at your scale or close to it. You adopt it. You do not fork it. You file pull requests when you find gaps. You benefit from everybody else in the ecosystem stress testing the same code you are running. The cost of the runtime to you is the cost of staying current with releases, and that is a much smaller cost than writing it yourself.

Owning the workflow means putting your engineering budget into the things the open source ecosystem cannot give you, because those things are specific to your business. The state machine for your domain. The evaluation harness built on your real data. The tool integrations into your systems of record. The safety and compliance layer that reflects your regulatory reality. The feedback loop from your actual users. The observability that maps cleanly onto the questions your CTO actually asks.

There is a symmetry to this split that I find clarifying. The runtime is the thing that looks different from the outside but turns out to be the same underneath. The workflow is the thing that looks the same from the outside but turns out to be different underneath. Most enterprise platform teams have been optimizing the wrong half for the last twelve months.

The memory layer deserves a specific note here because memory is where I see the next wave of enterprise mistakes being set up. Every enterprise wants long-lived agent memory. Most of them are considering building it themselves. This is the same mistake as the orchestrator mistake, one layer up. Mem0, Zep, Letta, and a handful of others already exist. They have production deployments. They have founders and maintainers who think about this problem full time. If you are planning to build a memory store from scratch in 2026, you are about twelve months late. I wrote about the general shape of the memory problem in MemPalace and the Memory Layer, and the same logic applies.

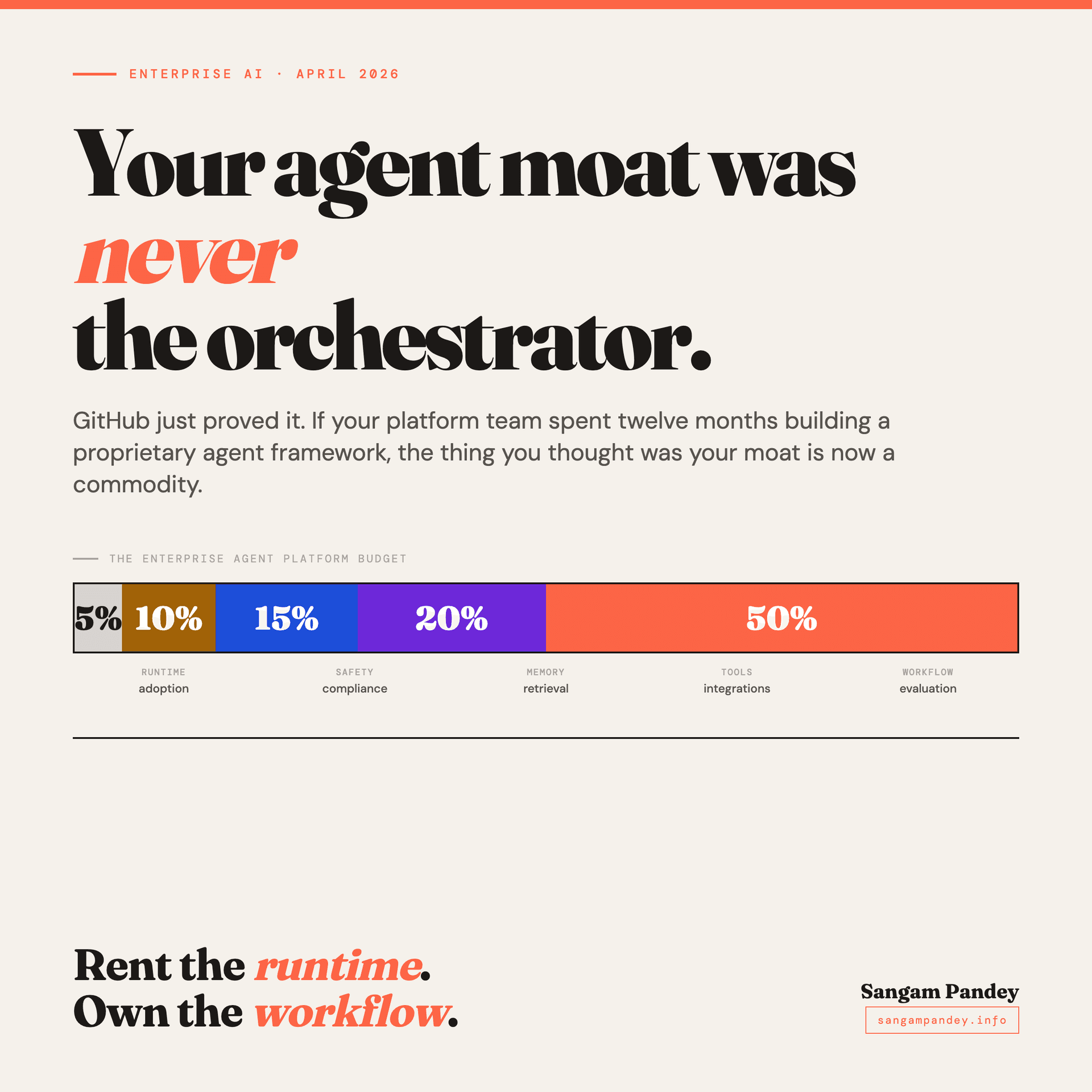

The budget table that should be on your desk Monday morning#

If you are responsible for an enterprise agent platform, here is the allocation I would argue for in any serious planning conversation this quarter.

Five percent of your platform budget goes to runtime adoption. This is the work of picking an open source harness, integrating it into your deployment environment, staying current with releases, and contributing back when you find something you want fixed. Five percent sounds low. It is low on purpose. If you are spending more than that on the runtime, you are probably reinventing something.

Ten percent goes to the safety and compliance envelope around whatever runtime you picked. This is the policy layer, the logging layer, the secrets management, the outbound request filtering, the audit trail that your risk team needs. This is real work and it is legitimate work. It is also finite work.

Fifteen percent goes to memory and retrieval. This includes adopting a memory store, integrating it with your data sources, and building the retrieval patterns that connect your agents to your enterprise data. Again, mostly adoption and integration, not raw invention.

Twenty percent goes to tools and integrations. This is the library of tool wrappers around your systems of record. Your CRM. Your ERP. Your data warehouse. Your ticketing system. This work has to be done by you because nobody else knows what a ticket looks like in your instance of your ticketing system. Every hour spent here is an hour that directly reduces the gap between what the agent can do and what your users actually need.

Fifty percent goes to workflow design and evaluation. Half of the budget. This is the number that most enterprise platform teams find surprising, because it is so much larger than what they are currently spending. This is where the differentiation actually lives. The state machines. The evaluation harness. The quality bar. The feedback loop from production. The work of figuring out what your agents should actually do and proving that they do it well.

If your current budget split is thirty percent runtime and twenty percent workflow, you are spending your money in almost exactly the reverse of what I am describing. That is the tax.

The numbers are directional, not prescriptive. The point is the ratio. Your workflow budget should dwarf your runtime budget by a factor of five or more. If it does not, something is wrong with your platform strategy.

Steelman for the people still building their own#

I want to give the strongest possible version of the counterargument, because I have heard it a few times and it deserves a real response.

The counterargument is this. Open source harnesses are designed for general cases. Your enterprise has specific requirements around data residency, air gapping, regulatory certification, integration with legacy systems, or model routing policies that no off the shelf framework supports. You built your own harness because the off the shelf ones did not meet your constraints. The commoditization event does not apply to you because the commodity product cannot do what you need.

This is a reasonable argument and it is right in perhaps ten percent of the cases I hear it applied to. Some enterprises genuinely have constraints that the open source ecosystem does not meet. A bank that has to run everything inside a specific hardened environment with a specific audit regime is not going to drop in LangGraph next week. A defense contractor with air gap requirements is not going to federate across vendor clouds. These are real constraints.

The honest answer for those cases is that you should still not be writing the harness from scratch. You should be forking an open source harness and maintaining the fork. The delta you need to apply to make LangGraph or Mastra or the Copilot SDK fit your constraints is almost certainly smaller than the delta between starting from scratch and having a working system. You keep the benefits of the upstream community for everything that does not conflict with your constraints. You only pay the tax on the parts that actually require customization. A fork is cheaper than a rewrite in every case I have seen.

The other ninety percent of the cases I hear this argument applied to are not actually constraint problems. They are preference problems. The team built a proprietary harness because they wanted to, not because they had to. The constraint story was assembled after the fact to justify the work. I say this without malice. I have done it myself on other projects. It is an easy mistake to make because proprietary work feels more important than adoption work, even when adoption is the correct decision.

What to do this week if you are stuck in the wrong layer#

If you are reading this and recognizing your own platform in the mirror, here is what I would actually do this week, in order.

Start with an honest inventory of where your engineering budget is going. Not the official allocation. The actual allocation. How many engineer-weeks in the last quarter went into runtime work versus workflow work. Be specific. Count sprints. This exercise usually takes a couple of hours and it is deeply clarifying.

Pick one open source harness and run a two week spike. The goal of the spike is not to replace your internal framework. The goal is to rebuild one of your existing agent workflows on the open source harness and see how much of your internal code was load bearing versus how much was reinventing the wheel. You will learn more from this spike than from any strategic document.

Write the honest memo about what the spike revealed. Some of what your team built is genuinely specific to your enterprise and worth keeping. Some of it is not. The memo makes the distinction visible and creates space for the next decision.

Then, and only then, have the migration conversation. Migration is hard and not every platform should migrate. But many should, and the decision is easier to make when you can point at a working spike and a real inventory.

The thing not to do is to double down on the proprietary framework out of sunk cost. The sunk cost is real. It is also in the past. The question is how your team spends the next year, not how they spent the last one.

FAQ#

Is this post saying that all proprietary agent frameworks are bad?#

No. It is saying that the majority of proprietary agent frameworks built inside enterprises in 2025 were filling a gap that the open source ecosystem has now closed. There are still cases where a proprietary framework is the right answer, specifically when regulatory or integration constraints cannot be met by forking an existing framework. Those cases are rarer than most platform teams currently assume.

What open source agent harnesses should I actually consider?#

The current shortlist most enterprises are converging on includes LangGraph for Python-first production work, Mastra for TypeScript, the Microsoft Agent Framework for organizations already in the Azure ecosystem, Dify for low-code internal tool use cases, and the Copilot SDK for teams that want the tightest GitHub integration. None of these are perfect. All of them are better starting points than a greenfield proprietary framework. The right pick depends on your existing stack and team skillset, not on which one has the best marketing page.

How should I budget between runtime and workflow work in 2026?#

A rough rule of thumb I have been recommending is to put no more than fifteen to twenty percent of your platform engineering budget into runtime, memory, and tool adoption, and at least fifty percent into workflow design, evaluation, and feedback loops. The remaining budget goes to safety, compliance, and integration work. The exact numbers matter less than the ratio. If your runtime spend is larger than your workflow spend, you are very likely in the wrong layer.

What about the memory store problem?#

Memory is the layer where I see the next wave of enterprise mistakes setting up. Several production grade open source memory stores now exist, and the same rent the runtime logic applies. If you are planning to build a long-lived agent memory system from scratch in 2026, the default recommendation is to adopt instead. The time saved goes into the workflow layer, which is where the differentiation lives.

What does rent the runtime own the workflow mean in practice?#

It means that the parts of your agent platform that are the same across every enterprise should be rented from the open source ecosystem, and the parts that are specific to your business should be where your engineering team spends its time. The orchestration loop, the tool calling surface, and the memory interface are rented. The state machines, the evaluation harness, the integrations with your systems of record, and the feedback loops from your real users are owned. The phrase is shorthand for a budget allocation, not a religious position.

Is GitHub going to kill the rest of the open source agent ecosystem?#

Probably not. GitHub tends to raise the floor for a category rather than monopolize it. The Copilot SDK is going to pull a lot of teams toward adopting any harness at all, which is a net positive for LangGraph, Mastra, and the rest of the ecosystem. The frameworks that will struggle are the ones that were solving problems GitHub now solves for free, not the ones that made different design choices. Expect consolidation in the next twelve months, not extinction.

Where does this leave the teams that did build proprietary frameworks in 2025?#

The honest answer is that most of those teams should take the spike, write the memo, and have the migration conversation I described above. Some of the work they did is transferable to the workflow layer, which is where their attention should have been in the first place. The proprietary framework itself is probably not worth preserving for its own sake. The judgment, the domain knowledge, and the understanding of what their enterprise actually needs from an agent platform are all still valuable. That judgment is easier to apply on top of a rented runtime than inside a proprietary one.

For a broader view of how the harness, memory, and workflow layers fit together across a production agent system, Scion and the Multi-Agent Harness walks through the same split at the infrastructure level and is a useful companion piece to this one.