Three engineers. An empty repository. A rule that nobody on the team writes a single line of code by hand.

Five months later, the repository contained over one million lines of production code. Roughly 1,500 pull requests had been opened and merged. Not one of them was written by a human. Not one of them received a human code review before merging.

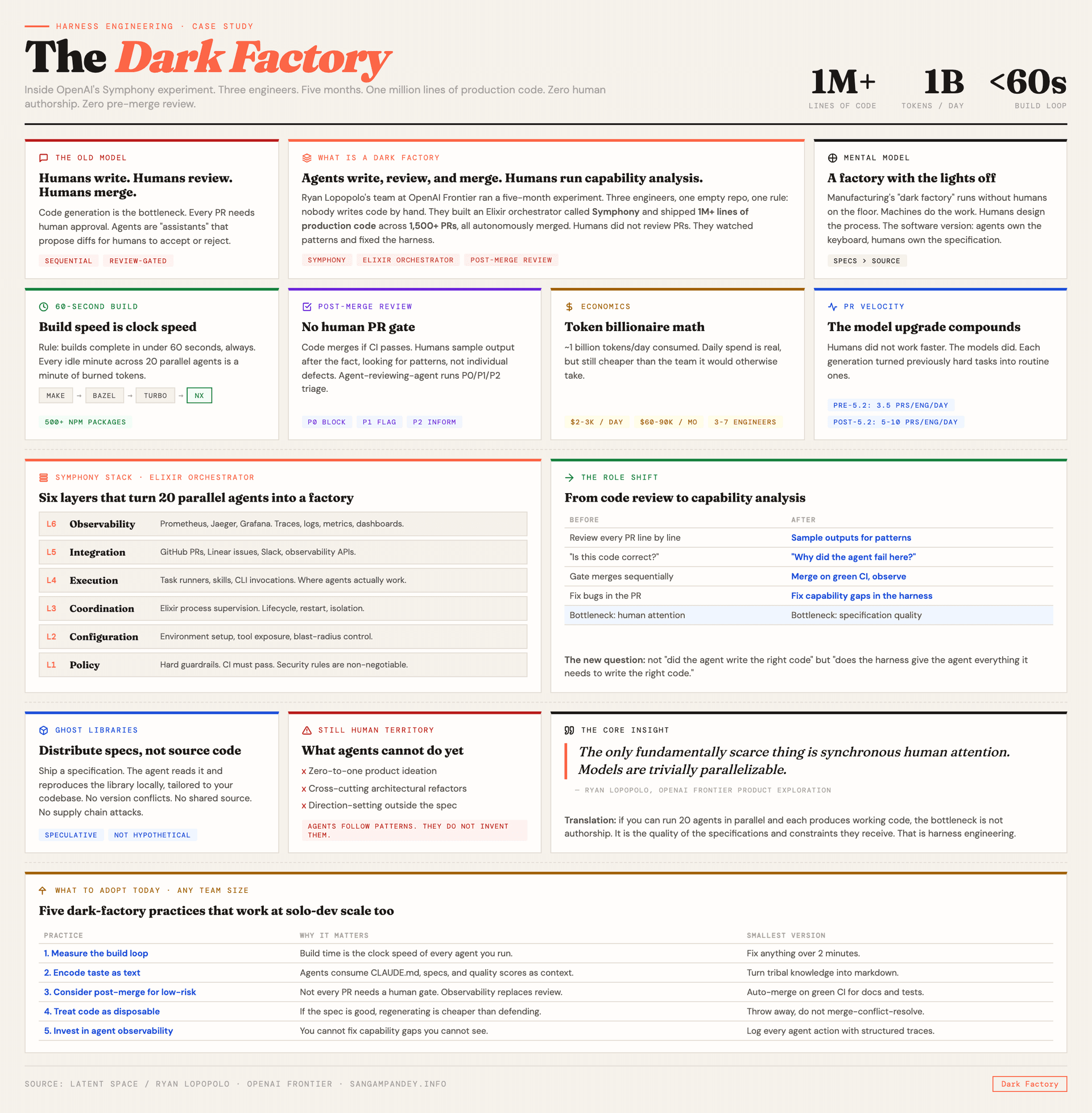

Ryan Lopopolo described this experiment on the Latent Space podcast in April 2026, and the details go far beyond what the original OpenAI blog post revealed. I wrote about the foundations of harness engineering when the first numbers came out. What Lopopolo shared on the podcast fills in the operational reality behind those numbers. The machinery, the economics, the failure modes, and a concept called "ghost libraries" that I think will change how we distribute software within the next two years.

Table of Contents#

- The dark factory concept

- Symphony and the six layer stack

- The one minute build constraint

- Post-merge review is not chaos

- A billion tokens a day

- Ghost libraries

- What agents still cannot do

- The model trajectory argument

- What this means for your team

- FAQ

The dark factory concept#

A dark factory, in manufacturing, is a facility that runs with the lights off. No humans on the floor. Machines do the work. Humans design the process, monitor the output, and intervene when something breaks.

Lopopolo's team at OpenAI ran what he calls the first dark factory for software. The agents wrote code. The agents reviewed code. The agents merged code. The humans did something else entirely. They designed specifications, built observability dashboards, and spent their time figuring out why agents struggled with certain tasks rather than doing those tasks themselves.

The key quote from the podcast is worth sitting with: "The only fundamentally scarce thing is synchronous human attention. Models are trivially parallelizable."

That sentence reframes the entire economics of software development. If you can run 20 agents in parallel and each one produces working code, the bottleneck is not authorship. The bottleneck is the quality of the specifications and constraints those agents receive. I explored a related angle in the five pillars of agentic engineering, where the first pillar is literally "context architecture." The dark factory makes that pillar load-bearing in the most literal sense.

Symphony and the six layer stack#

The orchestration system behind the experiment is called Symphony. It is written in Elixir, which is not a coincidence. Elixir's process supervision model, inherited from Erlang, is designed for managing thousands of concurrent, isolated processes. Each agent is a supervised process. If it crashes, the supervisor restarts it. If it hangs, the supervisor kills it and spawns a replacement.

Symphony has six layers:

Policy. The institutional guardrails. CI must pass. Security rules are non-negotiable. These are not suggestions. They are hard constraints that agents cannot override.

Configuration. Environment setup and tool exposure. Which CLI tools does the agent have access to? Which parts of the repository can it see? This is where you control blast radius.

Coordination. The Elixir process supervision layer. Agent lifecycle management. Starting, stopping, restarting, and routing messages between agents.

Execution. Task runners, skills, and CLI invocations. This is where the actual work happens. The agent runs a build, executes tests, opens a PR.

Integration. Connections to external systems. GitHub for PRs. Linear for issue tracking. Slack for notifications. Observability APIs for metrics.

Observability. Traces, logs, metrics, and dashboards. Prometheus for metrics. Jaeger for distributed tracing. Grafana for visualization. All deployed locally.

If you have read about Scion's approach to multi-agent orchestration, you will notice some architectural parallels. Both systems separate the agent's intelligence from its operational environment. Both treat isolation and observability as first-class concerns. The difference is that Symphony was built for a specific internal team at OpenAI, while Scion is designed to be harness-agnostic.

The skills system is particularly interesting. There are roughly six core skills, each defined in markdown. Here is the part that surprised me: the skills are self-modifying. After a session, agents reflect on their logs and update the skill definitions. The team reviews these changes as part of their post-merge sampling, but the agents are the ones proposing improvements to their own instructions.

The one minute build constraint#

This is the single most actionable insight from the entire podcast. Lopopolo's team enforced a strict rule: the build loop must complete in under one minute. Not five minutes. Not "as fast as we can get it." Under sixty seconds, always.

The reasoning is straightforward. An agent that waits three minutes for a build is an agent that is idle for three minutes. Multiply that by 20 agents running in parallel and you have an hour of wasted compute per build cycle. At a billion tokens a day, idle time is expensive.

They went through four build systems to maintain this constraint. Make, then Bazel, then Turbo, then NX. Each migration happened because the previous system could not keep build times under one minute as the codebase grew. By the end, the monorepo contained over 500 npm packages, and the build still completed in under a minute.

This has direct implications for anyone running multiple Claude Code agents in parallel. If your CI pipeline takes ten minutes, your agents are spending most of their time waiting. The build loop is not infrastructure. It is the clock speed of your entire agentic system.

Post-merge review is not chaos#

The idea of merging code without human review sounds terrifying. It sounded terrifying to me when I first read the headline. But the actual workflow is more nuanced than "yolo merge everything."

The team uses what they call post-merge review. Code gets merged if it passes CI. After merging, humans sample the output. They do not read every PR. They look at patterns. Which agents are struggling? Which types of tasks produce low-quality output? Where are the recurring failures?

This is a fundamentally different feedback loop. Pre-merge review is a gate. It catches individual problems but creates a sequential bottleneck. Post-merge review is an observatory. It catches systemic problems and removes the bottleneck entirely.

The agents also review each other's code. PR review agents assign P0, P1, and P2 priority levels to issues they find. P0 issues block the merge. P1 issues get flagged for human attention. P2 issues are informational.

Lopopolo describes his team's daily work as capability analysis, not code review. They ask: "Why did the agent fail at this task? What capability is missing? How do we add that capability to the harness?" That is a very different question from "Is this code correct?"

This mirrors what I described in long-running AI agent harness design. The shift from reviewing artifacts to monitoring systems. The agent is not a junior developer who needs line-by-line review. The agent is a system that needs observability.

A billion tokens a day#

The economics deserve their own section. Lopopolo's team consumed approximately one billion tokens per day. At current pricing, that works out to roughly $2,000 to $3,000 per day, or $60,000 to $90,000 per month.

That sounds like a lot until you compare it to the alternative. Three to seven engineers, fully loaded with salary, benefits, and infrastructure, cost significantly more than $90,000 per month. And those engineers produce nowhere near 1,500 PRs in five months while maintaining their own sanity.

The team's PR velocity tells the story. Before GPT-5.2, they averaged 3.5 PRs per engineer per day. After GPT-5.2 landed with background shell execution, that number jumped to 5 to 10 PRs per engineer per day. The humans did not work faster. The agents got better.

This is the "token billionaire" framing from the podcast title. If you are consuming a billion tokens a day, you are operating at a scale where individual token costs are irrelevant. What matters is throughput per dollar. And on that metric, the dark factory wins by a margin that makes the comparison almost unfair.

For teams thinking about the economics of agentic applications, this is the trajectory. The cost of tokens is dropping. The capability of models is increasing. The intersection of those two curves is where the dark factory becomes cheaper than a traditional team for an expanding set of tasks.

Ghost libraries#

This is the concept from the podcast that I keep thinking about. Lopopolo describes "ghost libraries" as a future where software is distributed as specifications rather than source code.

The idea is simple once you hear it. Today, you install a library by pulling source code from a registry. npm install, pip install, cargo add. You get thousands of files that you never read, bundled into your project.

In the ghost library model, you distribute a specification. The agent reads the specification and reproduces the library locally, tailored to your project's specific needs. No shared source code. No version conflicts. No supply chain attacks through compromised packages.

Lopopolo frames it this way: "A 2,000-line dependency can be reimplemented by an agent cheaper than it can be maintained by a human." If the agent can read a specification and produce a correct implementation in your preferred language, using your preferred patterns, integrated directly into your codebase, why would you pull someone else's source code?

This is obviously speculative. But it is speculative in the way that "agents can write production code" was speculative eighteen months ago. The trajectory is clear even if the timeline is not.

What agents still cannot do#

Lopopolo is honest about the boundaries. Two categories of work still require human steering.

First, zero-to-one product ideation. The agents can implement features, but they cannot decide what to build. The "what" still comes from humans. The agents handle the "how."

Second, complex cross-cutting refactorings. When a change spans architectural boundaries and requires understanding the full system's intent, agents struggle. They follow existing patterns well. They do not invent new architectural directions.

This maps to what I see in my own work with multi-agent systems. Agents are excellent at parallelizable, well-specified tasks. They are poor at tasks that require holding the full system context in mind and making judgment calls that are not captured in any specification.

The gap is narrowing. Each model generation pushes the boundary. But for now, the dark factory is a factory, not a design studio. It produces what you specify. It does not decide what should be produced.

The model trajectory argument#

One of the most striking parts of the podcast is how Lopopolo describes working across model generations. His team started with GPT-5.2, went through 5.3, and is now on 5.4.

GPT-5.2 introduced background shell execution, which let agents run non-blocking processes. This was the upgrade that forced the team to rework their build system, because agents suddenly cared about build speed in a way they had not before.

GPT-5.3 and 5.4 brought longer context windows, better reasoning, and the merging of specialized coding capability with general reasoning. GPT-5.4 has a one million token context window and computer use capabilities.

The pattern Lopopolo describes: each model generation makes previously difficult tasks routine. Tasks that required careful prompting and multiple retries with 5.2 just work on 5.4. His advice: "Do not bet against the model trajectory."

This is relevant for how you think about agentic workflow patterns. If you are designing rigid scaffolding around current model limitations, you are building infrastructure that the next model generation will make unnecessary. The Symphony team learned this directly. Rigid agent scaffolding became counterproductive as reasoning models improved. The less structure they imposed on agent decision-making, the better the output.

What this means for your team#

You do not need to be OpenAI to learn from this experiment. Here are the concrete takeaways.

Measure your build loop. If it takes more than two minutes, it is the single biggest bottleneck for agent productivity. Fix this before you fix anything else.

Encode taste as text. Lopopolo's team wrote quality score tables, reliability documents, and specification files. The agents consumed these as context. Your CLAUDE.md or AGENTS.md file is the minimum version of this. Make it specific. Make it machine-readable. If you have been following the map-not-manual approach I described earlier, this is the natural next step.

Consider post-merge for low-risk changes. Not every PR needs a human review. If your CI is comprehensive and your structural tests are good, the low-risk changes can merge automatically. Reserve your attention for the high-risk ones.

Treat code as disposable. The dark factory treats code as a byproduct, not an artifact. Merge conflicts are resolved by throwing away one version and regenerating it. If this makes you uncomfortable, ask yourself: is the code valuable, or is the specification valuable? If the specification is good enough to regenerate the code, the code itself is just a cache.

Invest in observability for agents. Logging, tracing, and metrics are not optional in a system where agents are producing output faster than humans can review it. You need to know what is happening, not after the fact, but continuously.

The dark factory is not the future for every team. But the principles behind it, fast build loops, encoded specifications, post-merge review, agent observability, are applicable at any scale. Even a solo developer running a single Claude Code instance benefits from a one-minute build and a well-structured CLAUDE.md.

The question is not whether you should run a dark factory. The question is how many of these practices you can adopt incrementally, starting now.

FAQ#

What is a dark factory in software development?#

A dark factory is a concept borrowed from manufacturing where a facility operates without human workers on the floor. In software, it refers to a development workflow where AI agents write, review, and merge code autonomously while humans focus on specifications, observability, and capability analysis. OpenAI's internal experiment demonstrated this approach by producing over one million lines of code with zero human-written source.

How much does it cost to run a dark factory?#

OpenAI's team consumed approximately one billion tokens per day, costing roughly $2,000 to $3,000 daily or $60,000 to $90,000 per month. This is significantly less than the fully loaded cost of the engineering team that would otherwise be needed to produce equivalent output. The economics improve with each model generation as capability increases and per-token costs decrease.

What is Symphony in the context of harness engineering?#

Symphony is an Elixir-based agent orchestration system built by OpenAI's Frontier Product Exploration team. It has six layers: policy, configuration, coordination, execution, integration, and observability. Elixir was chosen for its process supervision model, which enables managing thousands of concurrent agent processes with automatic restart and failure isolation.

What are ghost libraries?#

Ghost libraries are a speculative concept where software dependencies are distributed as specifications rather than source code. Instead of installing a package from npm or PyPI, an AI agent reads the specification and reproduces the implementation locally, tailored to your project. This eliminates version conflicts and supply chain attack vectors, though the concept is still in its early stages.

Why is the one minute build constraint so important?#

Every minute an agent spends waiting for a build is a minute of idle compute across all parallel agents. If you run 20 agents and each waits three minutes per build, that is an hour of wasted time per cycle. OpenAI's team went through four build systems (Make, Bazel, Turbo, NX) specifically to maintain sub-minute builds. For any team running agentic workflows, build speed is the clock speed of the entire system.

What is the difference between pre-merge and post-merge code review?#

Pre-merge review requires a human to approve every pull request before it is merged. This creates a sequential bottleneck. Post-merge review lets agents merge code after passing CI, and humans sample the output afterward to identify systemic patterns and capability gaps. It trades individual gatekeeping for systemic observability.

Can small teams use dark factory principles?#

Yes. The core practices, fast build loops, encoded specifications, agent observability, and treating code as disposable, apply at any team size. A solo developer benefits from a sub-minute build and a well-structured CLAUDE.md. The scale changes, but the principles remain the same.